...

WCS allows configuring the camera and the microphone from a browser. Let's see how this can be done and what parameters you can adjust when an audio and video stream is captured. We use the Media Devices web application as an example:

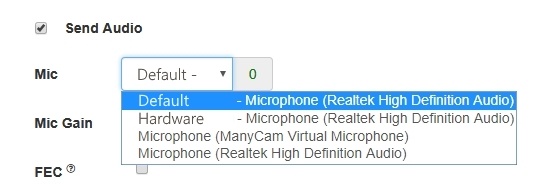

Microphone settings

1. Selecting the microphone from the list

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.getMediaDevices(null, true, MEDIA_DEVICE_KIND.INPUT).then(function (list) {

list.audio.forEach(function (device) {

...

});

...

}).catch(function (error) {

$("#notifyFlash").text("Failed to get media devices");

}); |

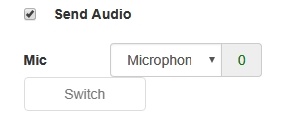
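The loop body elided above typically fills a drop-down with the devices found. The sketch below shows one way to do that as a pure function, so it can be run outside a browser. The helper name `toOptions` and the fallback label are hypothetical, and it assumes each device entry exposes `id` and `label` fields.

```javascript
// Hypothetical helper (not part of the Flashphoner API): convert a device list
// into {value, text} pairs suitable for <option> elements.
// Assumption: each device object has `id` and `label` fields.
function toOptions(devices, kindName) {
    return devices.map(function (device, index) {
        return {
            value: device.id,
            // Labels can be empty until the user grants access to the devices,
            // so fall back to a generic numbered name
            text: device.label || (kindName + " " + (index + 1))
        };
    });
}

// Sample data standing in for the `list.audio` array
const sampleAudioDevices = [
    { id: "default", label: "" },
    { id: "abc123", label: "USB Microphone" }
];
const options = toOptions(sampleAudioDevices, "Microphone");
console.log(options);
```

In a page, each returned pair would be appended to the `#audioInput` select element.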

2. Microphone switching while stream is publishing

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

$("#switchMicBtn").click(function (){

publishStreamstream.switchMic();

.then(function(id) {

} $('#audioInput option:selected').prop('disabledselected', !($('#sendAudio').is(':checked'))); |

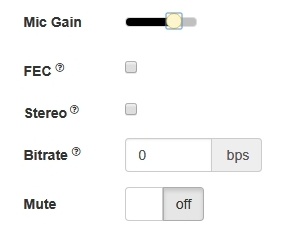

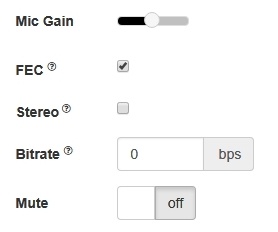

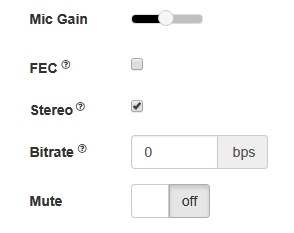

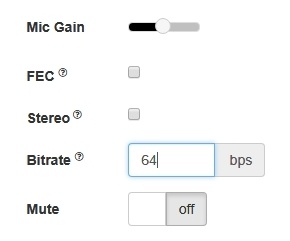

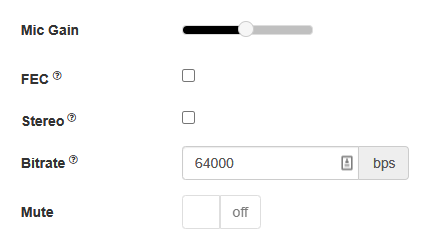

3. Adjusting microphone gain (works in Chrome only)

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

$("#micGainControl").slider(false); $("#audioInput option[value='"+ id +"']").prop('selected', true); }).catch(function(e) { range: "min", console.log("Error " min: 0,+ e); max: 100,}); }).prop('disabled', !($('#sendAudio').is(':checked'))); |

3. Adjusting microphone gain (works in Chrome only)

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

value: currentGainValue, $("#micGainControl").slider({ steprange: 10"min", animatemin: true0, slidemax: function (event, ui) {100, value: currentGainValue, step: 10, animate: true, slide: function (event, ui) { currentGainValue = ui.value; if(previewStream) { publishStream.setMicrophoneGain(currentGainValue); } } }); |

4. Enabling error correction (for the Opus codec only)

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

if (constraints.audio) {

constraints.audio = {

deviceId: $('#audioInput').val()

};

if ($("#fec").is(':checked'))

constraints.audio.fec = $("#fec").is(':checked');

...

} |

5. Setting stereo/mono mode.

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

if (constraints.audio) {

constraints.audio = {

deviceId: $('#audioInput').val()

};

...

if ($("#sendStereoAudio").is(':checked'))

constraints.audio.stereo = $("#sendStereoAudio").is(':checked');

...

} |

6. Setting audio bitrate in bps

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

if (constraints.audio) {

constraints.audio = {

deviceId: $('#audioInput').val()

};

...

if (parseInt($('#sendAudioBitrate').val()) > 0)

constraints.audio.bitrate = parseInt($('#sendAudioBitrate').val());

} |

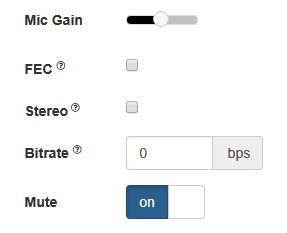

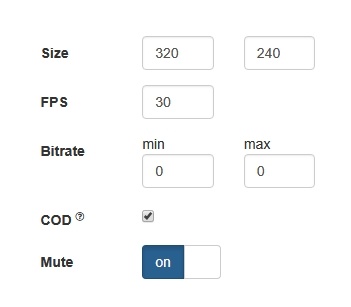
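The three snippets above (error correction, stereo mode, bitrate) all extend the same `constraints.audio` object. A minimal sketch of how they combine is shown below, with the jQuery lookups replaced by a plain `form` object so that it runs outside a browser. The helper name `buildAudioConstraints` and the `form` field names are hypothetical.

```javascript
// Hypothetical helper: assemble the audio constraints from plain form values.
// `form` stands in for the page controls (#sendAudio, #audioInput, #fec,
// #sendStereoAudio, #sendAudioBitrate); its field names are assumptions.
function buildAudioConstraints(form) {
    if (!form.sendAudio) {
        return false; // audio is not captured at all
    }
    const audio = { deviceId: form.deviceId };
    if (form.fec) {
        audio.fec = true; // error correction, Opus only
    }
    if (form.stereo) {
        audio.stereo = true;
    }
    // parseInt yields NaN for an empty field, and NaN > 0 is false,
    // so the bitrate is set only when a positive number was entered
    const bitrate = parseInt(form.bitrate, 10);
    if (bitrate > 0) {
        audio.bitrate = bitrate;
    }
    return audio;
}

console.log(buildAudioConstraints({
    sendAudio: true,
    deviceId: "mic-1",
    fec: true,
    stereo: false,
    bitrate: "64000"
}));
```

Optional keys are added only when the corresponding control is set, which mirrors the `if` checks in the snippets above.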

7. Turning off the microphone (mute).

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

if ($("#muteAudioToggle").is(":checked")) {

muteAudio();

}

|

Camera settings

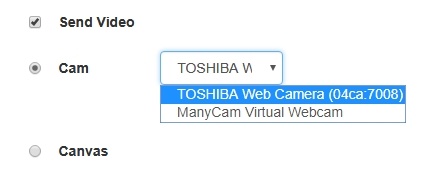

1. Camera selection

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.getMediaDevices(null, true, MEDIA_DEVICE_KIND.INPUT).then(function (list) {

...

list.video.forEach(function (device) {

...

});

}).catch(function (error) {

$("#notifyFlash").text("Failed to get media devices");

}); |

If access to audio devices should not be requested while choosing a camera, the getMediaDevices() function should be called with explicit constraints:

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.getMediaDevices(null, true, MEDIA_DEVICE_KIND.INPUT, {video: true, audio: false}).then(function (list) {
    ...
    list.video.forEach(function (device) {
        ...
    });
}).catch(function (error) {
    $("#notifyFlash").text("Failed to get media devices");
}); |

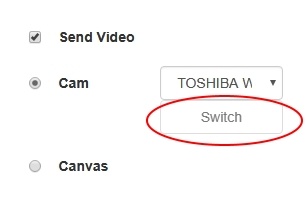

2. Switching cameras while stream is publishing

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

$("#switchBtn").text("Switch").off('click').click(function () {
    stream.switchCam().then(function(id) {
        $('#videoInput option:selected').prop('selected', false);
        $("#videoInput option[value='"+ id +"']").prop('selected', true);
    }).catch(function(e) {
        console.log("Error " + e);
    });
}); |

Camera switching can be done "on the fly" while the stream is being published. Switching works as follows:

- On a PC, cameras switch in the order in which they are listed in the operating system's device manager.

- On Android, the default is the front camera in Chrome and the rear camera in Firefox.

- On iOS in Safari, the front camera is selected by default, but the rear camera is first in the drop-down list.
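Switching "in order" can be pictured as stepping through the device list and wrapping around at the end. The sketch below illustrates that idea only. It is an assumption about the behavior, not the actual implementation of `switchCam()`, and the helper name `nextDeviceId` is hypothetical.

```javascript
// Hypothetical illustration of round-robin switching over a list of device IDs.
// This is NOT the Flashphoner implementation, only a sketch of the ordering idea.
function nextDeviceId(deviceIds, currentId) {
    if (deviceIds.length === 0) {
        return null; // nothing to switch to
    }
    // indexOf returns -1 for an unknown ID, so the first device is chosen then
    const index = deviceIds.indexOf(currentId);
    return deviceIds[(index + 1) % deviceIds.length];
}

const cameras = ["front", "rear", "usb"];
console.log(nextDeviceId(cameras, "front")); // "rear"
console.log(nextDeviceId(cameras, "usb"));   // "front" (wraps around)
```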

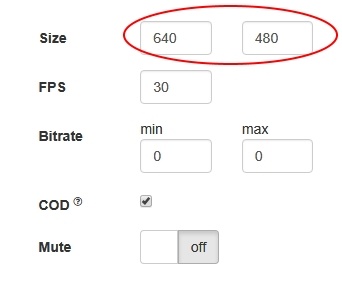

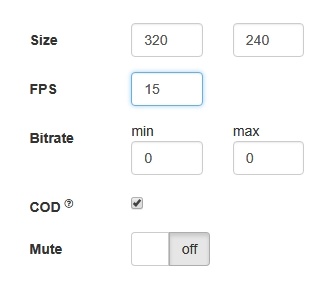

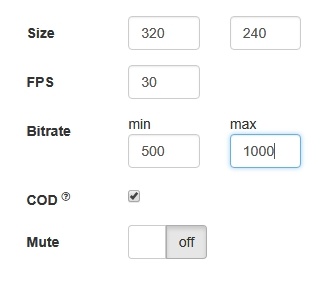

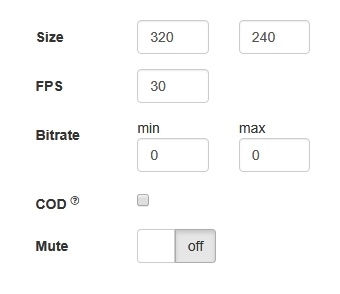

3. Specifying the resolution of the video

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

constraints.video = {
    deviceId: $('#videoInput').val(),
    width: parseInt($('#sendWidth').val()),
    height: parseInt($('#sendHeight').val())
};
if (Browser.isSafariWebRTC() && Browser.isiOS() && Flashphoner.getMediaProviders()[0] === "WebRTC") {
    constraints.video.deviceId = {exact: $('#videoInput').val()};
} |

4. Setting FPS

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

if (constraints.video) {
    ...
    if (parseInt($('#fps').val()) > 0)
        constraints.video.frameRate = parseInt($('#fps').val());
} |

5. Setting video bitrate in kbps

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

if (constraints.video) {
    if (constraints.customStream) {
        ...
    } else {
        ...
        if (parseInt($('#sendVideoMinBitrate').val()) > 0)
            constraints.video.minBitrate = parseInt($('#sendVideoMinBitrate').val());
        if (parseInt($('#sendVideoMaxBitrate').val()) > 0)
            constraints.video.maxBitrate = parseInt($('#sendVideoMaxBitrate').val());
        ...
    }
} |
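The resolution, FPS and bitrate snippets above all contribute to one `constraints.video` object. The sketch below combines them in a single function that takes plain values instead of reading the page controls, so it can be run on its own. The helper name `buildVideoConstraints` and the `form` field names are hypothetical.

```javascript
// Hypothetical helper: assemble the video constraints from plain form values.
// `form` stands in for the page controls (#videoInput, #sendWidth, #sendHeight,
// #fps, #sendVideoMinBitrate, #sendVideoMaxBitrate); field names are assumptions.
function buildVideoConstraints(form) {
    const video = {
        deviceId: form.deviceId,
        width: parseInt(form.width, 10),
        height: parseInt(form.height, 10)
    };
    // Each optional value is applied only if a positive number was entered
    const fps = parseInt(form.fps, 10);
    if (fps > 0) {
        video.frameRate = fps;
    }
    const minBitrate = parseInt(form.minBitrate, 10);
    if (minBitrate > 0) {
        video.minBitrate = minBitrate;
    }
    const maxBitrate = parseInt(form.maxBitrate, 10);
    if (maxBitrate > 0) {
        video.maxBitrate = maxBitrate;
    }
    return video;
}

console.log(buildVideoConstraints({
    deviceId: "cam-1",
    width: "640",
    height: "480",
    fps: "30",
    minBitrate: "",
    maxBitrate: "1000"
}));
```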

6. Setting CPU Overuse Detection

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

if (!$("#cpuOveruseDetection").is(':checked')) {
    mediaConnectionConstraints = {
        "mandatory": {
            googCpuOveruseDetection: false
        }
    }
} |

7. Turning off the camera (mute)

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

if ($("#muteVideoToggle").is(":checked")) {
    muteVideo();
} |

Testing camera and microphone capturing locally

The local camera and microphone test checks capturing in the browser without publishing a stream to the server.

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

function startTest() {
    Flashphoner.getMediaAccess(getConstraints(), localVideo).then(function (disp) {
        $("#testBtn").text("Release").off('click').click(function () {
            $(this).prop('disabled', true);
            stopTest();
        }).prop('disabled', false);
        window.AudioContext = window.AudioContext || window.webkitAudioContext;
        if (Flashphoner.getMediaProviders()[0] == "WebRTC" && window.AudioContext) {
            for (var i = 0; i < localVideo.children.length; i++) {
                if (localVideo.children[i] && localVideo.children[i].id.indexOf("-LOCAL_CACHED_VIDEO") != -1) {
                    var stream = localVideo.children[i].srcObject;
                    audioContextForTest = new AudioContext();
                    var microphone = audioContextForTest.createMediaStreamSource(stream);
                    var javascriptNode = audioContextForTest.createScriptProcessor(1024, 1, 1);
                    microphone.connect(javascriptNode);
                    javascriptNode.connect(audioContextForTest.destination);
                    javascriptNode.onaudioprocess = function (event) {
                        var inpt_L = event.inputBuffer.getChannelData(0);
                        var sum_L = 0.0;
                        for (var i = 0; i < inpt_L.length; ++i) {
                            sum_L += inpt_L[i] * inpt_L[i];
                        }
                        $("#micLevel").text(Math.floor(Math.sqrt(sum_L / inpt_L.length) * 100));
                    }
                }
            }
        } else if (Flashphoner.getMediaProviders()[0] == "Flash") {
            micLevelInterval = setInterval(function () {
                $("#micLevel").text(disp.children[0].getMicrophoneLevel());
            }, 500);
        }
        testStarted = true;
    }).catch(function (error) {
        $("#testBtn").prop('disabled', false);
        testStarted = false;
    });
    drawSquare();
} |
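The microphone level shown by the test is the root mean square (RMS) of one buffer of samples, scaled to 0..100. The sketch below isolates that calculation so it can be checked without a browser or audio hardware. The function name `micLevel` is hypothetical, and the samples are assumed to lie in the range -1..1, as Web Audio channel data does.

```javascript
// Hypothetical standalone version of the level calculation used in the
// onaudioprocess handler: RMS of the samples, scaled to a 0..100 value.
// Assumption: samples are floats in the range -1..1.
function micLevel(samples) {
    if (samples.length === 0) {
        return 0; // avoid dividing by zero on an empty buffer
    }
    let sumOfSquares = 0.0;
    for (let i = 0; i < samples.length; ++i) {
        sumOfSquares += samples[i] * samples[i];
    }
    return Math.floor(Math.sqrt(sumOfSquares / samples.length) * 100);
}

console.log(micLevel(new Float32Array([0.5, -0.5, 0.5, -0.5]))); // 50
console.log(micLevel(new Float32Array(1024)));                   // 0 (silence)
```

RMS is used rather than a plain average because audio samples are symmetric around zero, so their arithmetic mean stays close to zero regardless of loudness.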

SDP parameters replacing

When publishing a stream, SDP parameters can be replaced. The 'SDP replace' field sets a string template used to search for the parameter to replace, and the 'with' field sets the new parameter value.

...

To replace SDP parameters, a callback function is used. It should be set on stream creation in the sdpHook option of the createStream() method:

stream creation code

| Code Block | ||||

|---|---|---|---|---|

| ||||

publishStream = session.createStream({
    name: streamName,
    display: localVideo,
    cacheLocalResources: true,
    constraints: constraints,
    mediaConnectionConstraints: mediaConnectionConstraints,
    sdpHook: rewriteSdp,
    ...
}) |

rewriteSdp function code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function rewriteSdp(sdp) {

var sdpStringFind = $("#sdpStringFind").val().replace('\\r\\n','\r\n');

var sdpStringReplace = $("#sdpStringReplace").val().replace('\\r\\n','\r\n');

if (sdpStringFind != 0 && sdpStringReplace != 0) {

var newSDP = sdp.sdpString.toString();

newSDP = newSDP.replace(new RegExp(sdpStringFind,"g"), sdpStringReplace);

return newSDP;

}

return sdp.sdpString;

} |
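The replacement logic can be tried outside a browser by passing the SDP and both form values as plain strings. The sketch below does so. The function name `replaceInSdp`, the sample SDP and the chosen attribute are illustrative assumptions, and unlike the code above it converts every typed `\r\n` sequence, not only the first one.

```javascript
// Hypothetical standalone variant of the rewriteSdp logic.
// `find` is treated as a regular expression source, as in the code above.
function replaceInSdp(sdpString, find, replaceWith) {
    // A literal "\r\n" typed into a form field becomes a real CRLF pair
    const pattern = find.split('\\r\\n').join('\r\n');
    const replacement = replaceWith.split('\\r\\n').join('\r\n');
    if (pattern.length === 0 || replacement.length === 0) {
        return sdpString; // nothing to do, return the SDP unchanged
    }
    return sdpString.replace(new RegExp(pattern, "g"), replacement);
}

// Illustrative SDP fragment, not taken from a real session
const sampleSdp = "v=0\r\n" +
    "a=rtpmap:111 opus/48000/2\r\n" +
    "a=fmtp:111 minptime=10;useinbandfec=1\r\n";

console.log(replaceInSdp(sampleSdp, "useinbandfec=1", "useinbandfec=0"));
```

Because the search string is compiled as a regular expression, characters such as `.`, `+` or `(` in it keep their regex meaning and need escaping if a literal match is intended.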

Raising the bitrate of a video stream published in Chrome browser

SDP parameter replacement makes it possible to raise the bitrate of a published video stream. To do this, the SDP parameter 'a' must be replaced by this template when publishing an H264 stream:

...

| Code Block | ||||

|---|---|---|---|---|

| ||||

publishStream = session.createStream({

...

stripCodecs: "h264,H264,flv,mpv"

}).on(STREAM_STATUS.PUBLISHING, function (publishStream) {

...

});

publishStream.publish(); |

This capability is handy when you need to work around a browser bug or a conflict between the browser and a given codec. For example, if H.264 does not work in a browser, you can turn it off and switch to VP8 when working via WebRTC.

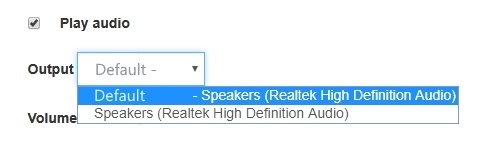

Sound device selection

...

Sound output device can be selected (and switched "on the fly") while a stream is playing in Chrome and MS Edge browsers.

code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.getMediaDevices(null, true, MEDIA_DEVICE_KIND.OUTPUT).then(function (list) {

list.audio.forEach(function (device) {

...

});

}).catch(function (error) {

$('#audioOutputForm').remove();

}); |

Note that Firefox and Safari always return an empty output device list, so sound output device selection is not supported in these browsers.

WebRTC statistics displaying

...

1. Statistics displaying while stream is published

stream.getStats() code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

publishStream.getStats(function (stats) {

if (stats && stats.outboundStream) {

if (stats.outboundStream.video) {

showStat(stats.outboundStream.video, "outVideoStat");

let vBitrate = (stats.outboundStream.video.bytesSent - videoBytesSent) * 8;

if ($('#outVideoStatBitrate').length == 0) {

let html = "<div>Bitrate: " + "<span id='outVideoStatBitrate' style='font-weight: normal'>" + vBitrate + "</span>" + "</div>";

$("#outVideoStat").append(html);

} else {

$('#outVideoStatBitrate').text(vBitrate);

}

videoBytesSent = stats.outboundStream.video.bytesSent;

...

}

if (stats.outboundStream.audio) {

showStat(stats.outboundStream.audio, "outAudioStat");

let aBitrate = (stats.outboundStream.audio.bytesSent - audioBytesSent) * 8;

if ($('#outAudioStatBitrate').length == 0) {

let html = "<div>Bitrate: " + "<span id='outAudioStatBitrate' style='font-weight: normal'>" + aBitrate + "</span>" + "</div>";

$("#outAudioStat").append(html);

} else {

$('#outAudioStatBitrate').text(aBitrate);

}

audioBytesSent = stats.outboundStream.audio.bytesSent;

}

}

...

}); |
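The bitrate shown above is the growth of the `bytesSent` counter between two calls, multiplied by 8. That equals bits per second only if `getStats()` is polled once per second. The sketch below, which assumes the caller can supply a timestamp with each sample, normalizes by the elapsed time instead. The name `createBitrateMeter` is hypothetical.

```javascript
// Hypothetical helper: convert a cumulative byte counter into bits per second,
// independent of the polling interval.
function createBitrateMeter() {
    let lastBytes = 0;
    let lastTimeMs = null;
    return function update(bytesTotal, nowMs) {
        let bitsPerSecond = 0;
        // The first sample only establishes the baseline
        if (lastTimeMs !== null && nowMs > lastTimeMs) {
            const deltaBits = (bytesTotal - lastBytes) * 8;
            bitsPerSecond = Math.round(deltaBits * 1000 / (nowMs - lastTimeMs));
        }
        lastBytes = bytesTotal;
        lastTimeMs = nowMs;
        return bitsPerSecond;
    };
}

const videoMeter = createBitrateMeter();
console.log(videoMeter(0, 0));         // 0 (baseline sample)
console.log(videoMeter(125000, 1000)); // 1000000
console.log(videoMeter(187500, 1500)); // 1000000
```

One meter instance per counter (video sent, audio sent, and so on) keeps the previous values separate, in the same way `videoBytesSent` and `audioBytesSent` are kept separate above.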

2. Statistics displaying while stream is played

stream.getStats() code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

previewStream.getStats(function (stats) {
    if (stats && stats.inboundStream) {
        if (stats.inboundStream.video) {
            showStat(stats.inboundStream.video, "inVideoStat");
            let vBitrate = (stats.inboundStream.video.bytesReceived - videoBytesReceived) * 8;
            if ($('#inVideoStatBitrate').length == 0) {
                let html = "<div>Bitrate: " + "<span id='inVideoStatBitrate' style='font-weight: normal'>" + vBitrate + "</span>" + "</div>";
                $("#inVideoStat").append(html);
            } else {
                $('#inVideoStatBitrate').text(vBitrate);
            }
            videoBytesReceived = stats.inboundStream.video.bytesReceived;
            ...
        }
        if (stats.inboundStream.audio) {
            showStat(stats.inboundStream.audio, "inAudioStat");
            let aBitrate = (stats.inboundStream.audio.bytesReceived - audioBytesReceived) * 8;
            if ($('#inAudioStatBitrate').length == 0) {
                let html = "<div style='font-weight: bold'>Bitrate: " + "<span id='inAudioStatBitrate' style='font-weight: normal'>" + aBitrate + "</span>" + "</div>";
                $("#inAudioStat").append(html);
            } else {
                $('#inAudioStatBitrate').text(aBitrate);
            }
            audioBytesReceived = stats.inboundStream.audio.bytesReceived;
        }
        ...
    }
}); |

Picture parameters management

When video stream is published, it is possible to manage picture resolution and frame rate with constraints.

...

1. While streaming from the chosen microphone and camera, when the 'Screen share' switch is set to 'on', the switchToScreen function is invoked

stream.switchToScreen code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function switchToScreen() {

if (publishStream) {

$('#switchBtn').prop('disabled', true);

$('#videoInput').prop('disabled', true);

publishStream.switchToScreen($('#mediaSource').val()).catch(function () {

$("#screenShareToggle").removeAttr("checked");

$('#switchBtn').prop('disabled', false);

$('#videoInput').prop('disabled', false);

});

}

} |

...

4. To revert to web camera streaming, the switchToCam function is invoked

stream.switchToCam code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function switchToCam() {

if (publishStream) {

publishStream.switchToCam();

$('#switchBtn').prop('disabled', false);

$('#videoInput').prop('disabled', false);

}

} |

...