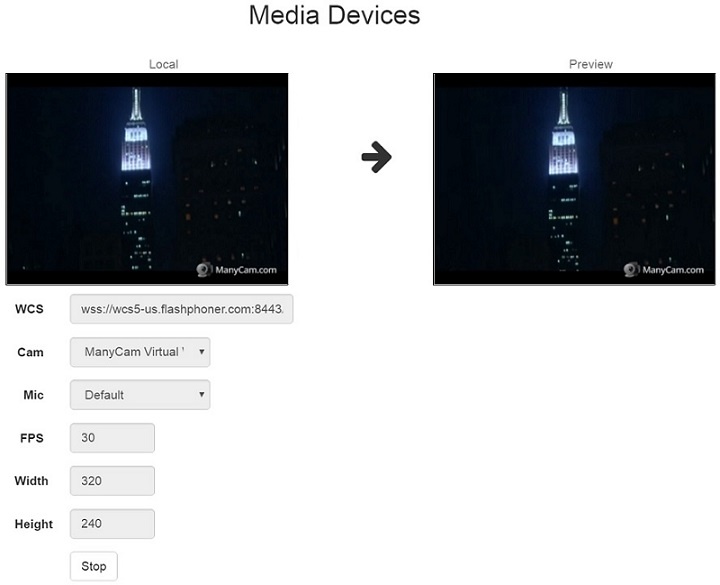

Example of streamer with access to media devices

This streamer can be used to publish or play back the following types of streams on Web Call Server:

- WebRTC

- RTMFP

- RTMP

It also allows selecting media devices and parameters for the published video.

...

On the screenshot below a stream is being published from the client.

Two video elements are displayed on the page:

- 'Local' - video from the camera

- 'PreviewPlayer' - the video as received from the server

Code of the example

The path to the source code of the example on WCS server is:

...

Here host is the address of the WCS server.

...

Analyzing the code

To analyze the code, let's take the version of file manager.js with hash ecbadc3, which is available here and can be downloaded with the corresponding build 2.0.5.28.2747212.

1. Initialization of the API.

Flashphoner.init() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.init({
    screenSharingExtensionId: extensionId,
    mediaProvidersReadyCallback: function (mediaProviders) {
        if (Flashphoner.isUsingTemasys()) {
            $("#audioInputForm").hide();
            $("#videoInputForm").hide();
        }
    }
}) |

2. List available input media devices.

Flashphoner.getMediaDevices() code

When input media devices are listed, drop-down lists of microphones and cameras on client page are filled.

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.getMediaDevices(null, true).then(function (list) {
    list.audio.forEach(function (device) {
        ...
    });
    list.video.forEach(function (device) {
        ...
    });
    ...
}).catch(function (error) {
    $("#notifyFlash").text("Failed to get media devices");
}); |
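The listing above elides how the drop-down lists are filled. A minimal sketch of that mapping, kept free of DOM calls so it can run outside a browser, might look like the following. The `id` and `label` fields on each device entry and the helper name `toOptions` are assumptions made for illustration; they are not taken from the example source.

```javascript
// Hypothetical helper: turn a device list into {value, text} pairs
// that a page could render as <option> elements.
// Assumes each device entry carries `id` and an optional `label`.
function toOptions(devices, fallbackPrefix) {
    return (devices || []).map(function (device, index) {
        return {
            value: device.id,
            // Browsers may hide labels until media access is granted,
            // so fall back to a generated name.
            text: device.label || (fallbackPrefix + " " + (index + 1))
        };
    });
}

// Stand-in for the `list` object passed to the then() handler.
var sampleList = {
    audio: [{id: "mic-1", label: "Built-in Microphone"}, {id: "mic-2", label: ""}],
    video: [{id: "cam-1", label: "USB Camera"}]
};

var audioOptions = toOptions(sampleList.audio, "Microphone");
var videoOptions = toOptions(sampleList.video, "Camera");
console.log(audioOptions, videoOptions);
```

Keeping the mapping separate from the jQuery calls makes it possible to check the fallback naming without a page.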

3. List available output media devices.

Flashphoner.getMediaDevices() code

When output media devices are listed, drop-down lists of speakers and headphones on client page are filled.

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.getMediaDevices(null, true, MEDIA_DEVICE_KIND.OUTPUT).then(function (list) {
    list.audio.forEach(function (device) {
        ...
    });
    ...
}).catch(function (error) {
    $("#notifyFlash").text("Failed to get media devices");
}); |

4. Get audio and video publishing constraints from client page

getConstraints() code

Publishing sources:

- camera (sendVideo)

- microphone (sendAudio)

- HTML5 Canvas (sendCanvasStream)

| Code Block | ||||

|---|---|---|---|---|

| ||||

constraints = {

audio: $("#sendAudio").is(':checked'),

video: $("#sendVideo").is(':checked'),

customStream: $("#sendCanvasStream").is(':checked')

};

|

Audio constraints:

- microphone choice (deviceId)

- error correction for Opus codec (fec)

- stereo mode (stereo)

- audio bitrate (bitrate)

...

| Code Block | ||||

|---|---|---|---|---|

| ||||

constraints.video = {

deviceId: {exact: $('#videoInput').val()},

width: parseInt($('#sendWidth').val()),

height: parseInt($('#sendHeight').val())

};

if (Browser.isSafariWebRTC() && Browser.isiOS() && Flashphoner.getMediaProviders()[0] === "WebRTC") {

constraints.video.deviceId = {exact: $('#videoInput').val()};

}

if (parseInt($('#sendVideoMinBitrate').val()) > 0)

constraints.video.minBitrate = parseInt($('#sendVideoMinBitrate').val());

if (parseInt($('#sendVideoMaxBitrate').val()) > 0)

constraints.video.maxBitrate = parseInt($('#sendVideoMaxBitrate').val());

if (parseInt($('#fps').val()) > 0)

constraints.video.frameRate = parseInt($('#fps').val());

|
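The video part of the listing above can be restated as a pure function, which makes the "only add the limit when it is positive" rule easier to see and to test. This is a sketch under assumptions: the function name `buildVideoConstraints` and the plain `form` object (standing in for the jQuery lookups) are hypothetical, and the iOS Safari branch is left out.

```javascript
// Hypothetical pure version of the video part of getConstraints():
// form values arrive as strings, optional limits are added only when positive.
function buildVideoConstraints(form) {
    var video = {
        deviceId: {exact: form.videoInput},
        width: parseInt(form.sendWidth, 10),
        height: parseInt(form.sendHeight, 10)
    };
    // Form field name -> constraint name, as in the listing above.
    var optional = {
        sendVideoMinBitrate: "minBitrate",
        sendVideoMaxBitrate: "maxBitrate",
        fps: "frameRate"
    };
    Object.keys(optional).forEach(function (field) {
        var value = parseInt(form[field], 10);
        // parseInt("") is NaN and NaN > 0 is false, so empty fields are skipped.
        if (value > 0) {
            video[optional[field]] = value;
        }
    });
    return video;
}

var video = buildVideoConstraints({
    videoInput: "cam-1",
    sendWidth: "640",
    sendHeight: "480",
    sendVideoMinBitrate: "",
    sendVideoMaxBitrate: "1000",
    fps: "0"
});
console.log(video);
```

With these inputs only `maxBitrate` is added; the empty minimum bitrate and the zero frame rate are left for the browser to decide.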

5. Get access to media devices for local test

Flashphoner.getMediaAccess() code

Audio and video constraints and <div>-element to display captured video are passed to the method.

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.getMediaAccess(getConstraints(), localVideo).then(function (disp) {

$("#testBtn").text("Release").off('click').click(function () {

$(this).prop('disabled', true);

stopTest();

}).prop('disabled', false);

...

testStarted = true;

}).catch(function (error) {

$("#testBtn").prop('disabled', false);

testStarted = false;

}); |

6. Connecting to the server

Flashphoner.createSession() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.createSession({urlServer: url, timeout: tm}).on(SESSION_STATUS.ESTABLISHED, function (session) {

...

}).on(SESSION_STATUS.DISCONNECTED, function () {

...

}).on(SESSION_STATUS.FAILED, function () {

...

}); |

7. Receiving the event confirming successful connection

ConnectionStatusEvent ESTABLISHED code

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.createSession({urlServer: url, timeout: tm}).on(SESSION_STATUS.ESTABLISHED, function (session) {

setStatus("#connectStatus", session.status());

onConnected(session);

...

}); |

8. Stream publishing

session.createStream(), publishStream.publish() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

publishStream = session.createStream({

name: streamName,

display: localVideo,

cacheLocalResources: true,

constraints: constraints,

mediaConnectionConstraints: mediaConnectionConstraints,

sdpHook: rewriteSdp,

transport: transportInput,

cvoExtension: cvo,

stripCodecs: strippedCodecs,

videoContentHint: contentHint

...

});

publishStream.publish(); |

9. Receiving the event confirming successful streaming

StreamStatusEvent PUBLISHING code

| Code Block | ||||

|---|---|---|---|---|

| ||||

publishStream = session.createStream({

...

}).on(STREAM_STATUS.PUBLISHING, function (stream) {

$("#testBtn").prop('disabled', true);

var video = document.getElementById(stream.id());

//resize local if resolution is available

if (video.videoWidth > 0 && video.videoHeight > 0) {

resizeLocalVideo({target: video});

}

enablePublishToggles(true);

if ($("#muteVideoToggle").is(":checked")) {

muteVideo();

}

if ($("#muteAudioToggle").is(":checked")) {

muteAudio();

}

//remove resize listener in case this video was cached earlier

video.removeEventListener('resize', resizeLocalVideo);

video.addEventListener('resize', resizeLocalVideo);

publishStream.setMicrophoneGain(currentGainValue);

setStatus("#publishStatus", STREAM_STATUS.PUBLISHING);

onPublishing(stream);

}).on(STREAM_STATUS.UNPUBLISHED, function () {

...

}).on(STREAM_STATUS.FAILED, function () {

...

});

publishStream.publish(); |

10. Stream playback

session.createStream(), previewStream.play() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

previewStream = session.createStream({

name: streamName,

display: remoteVideo,

constraints: constraints,

transport: transportOutput,

stripCodecs: strippedCodecs

...

});

previewStream.play(); |

11. Receiving the event confirming successful playback

StreamStatusEvent PLAYING code

| Code Block | ||||

|---|---|---|---|---|

| ||||

previewStream = session.createStream({

...

}).on(STREAM_STATUS.PLAYING, function (stream) {

playConnectionQualityStat.connectionQualityUpdateTimestamp = new Date().valueOf();

setStatus("#playStatus", stream.status());

onPlaying(stream);

document.getElementById(stream.id()).addEventListener('resize', function (event) {

$("#playResolution").text(event.target.videoWidth + "x" + event.target.videoHeight);

resizeVideo(event.target);

});

//wait for incoming stream

if (Flashphoner.getMediaProviders()[0] == "WebRTC") {

setTimeout(function () {

if(Browser.isChrome()) {

detectSpeechChrome(stream);

} else {

detectSpeech(stream);

}

}, 3000);

}

...

});

previewStream.play(); |

12. Stop stream playback

stream.stop() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

$("#playBtn").text("Stop").off('click').click(function () {

$(this).prop('disabled', true);

stream.stop();

}).prop('disabled', false); |

13. Receiving the event confirming successful playback stop

StreamStatusEvent STOPPED code

| Code Block | ||||

|---|---|---|---|---|

| ||||

previewStream = session.createStream({

...

}).on(STREAM_STATUS.STOPPED, function () {

setStatus("#playStatus", STREAM_STATUS.STOPPED);

onStopped();

...

});

previewStream.play(); |

14. Stop stream publishing

stream.stop() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

$("#publishBtn").text("Stop").off('click').click(function () {

$(this).prop('disabled', true);

stream.stop();

}).prop('disabled', false); |

15. Receiving the event confirming successful publishing stop

StreamStatusEvent UNPUBLISHED code

| Code Block | ||||

|---|---|---|---|---|

| ||||

publishStream = session.createStream({

...

}).on(STREAM_STATUS.UNPUBLISHED, function () {

setStatus("#publishStatus", STREAM_STATUS.UNPUBLISHED);

onUnpublished();

...

});

publishStream.publish(); |

16. Mute publisher audio

stream.muteAudio() code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

function muteAudio() {

if (publishStream) {

publishStream.muteAudio();

}

} |

17. Mute publisher video

stream.muteVideo() code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

function muteVideo() {

if (publishStream) {

publishStream.muteVideo();

}

} |

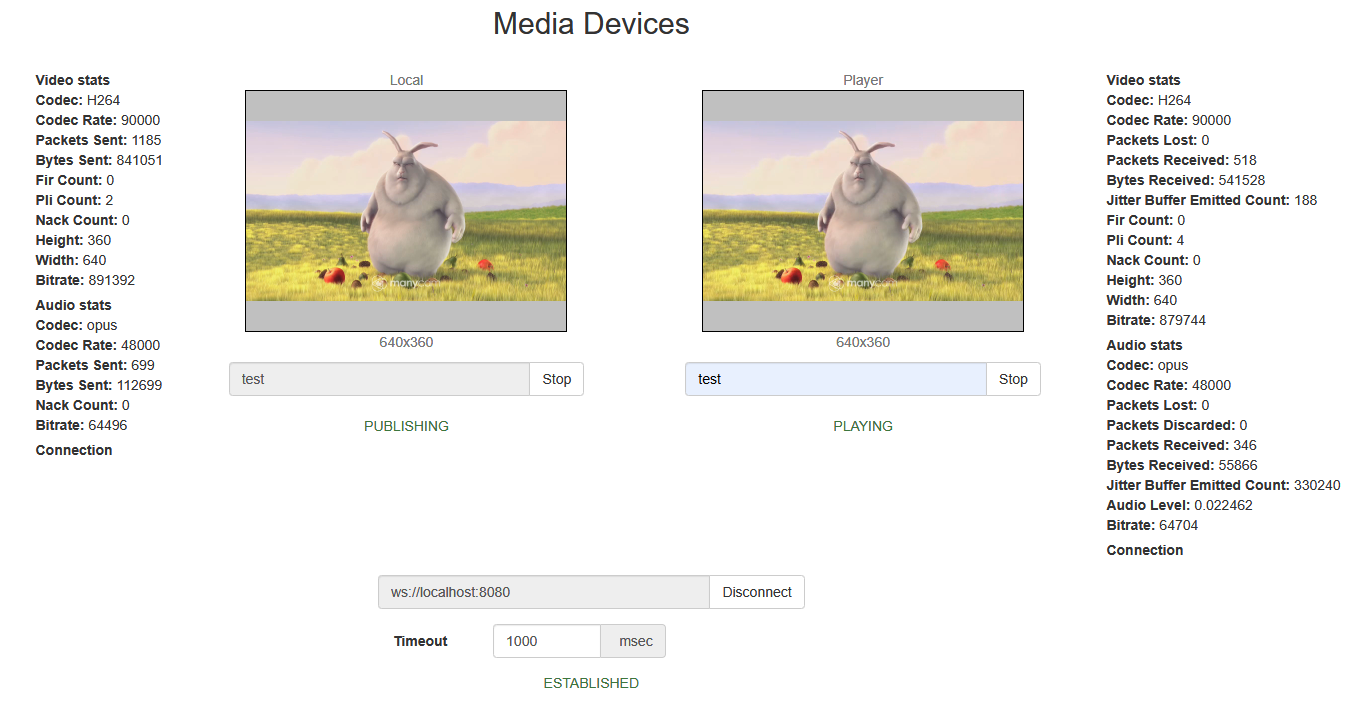

18. Show WebRTC stream publishing statistics

stream.getStats() code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

publishStream.getStats(function (stats) {
    if (stats && stats.outboundStream) {
        if (stats.outboundStream.video) {
            showStat(stats.outboundStream.video, "outVideoStat");
            let vBitrate = (stats.outboundStream.video.bytesSent - videoBytesSent) * 8;
            if ($('#outVideoStatBitrate').length == 0) {
                let html = "<div>Bitrate: " + "<span id='outVideoStatBitrate' style='font-weight: normal'>" + vBitrate + "</span>" + "</div>";
                $("#outVideoStat").append(html);
            } else {
                $('#outVideoStatBitrate').text(vBitrate);
            }
            videoBytesSent = stats.outboundStream.video.bytesSent;
            ...
        }
        if (stats.outboundStream.audio) {
            showStat(stats.outboundStream.audio, "outAudioStat");
            let aBitrate = (stats.outboundStream.audio.bytesSent - audioBytesSent) * 8;
            if ($('#outAudioStatBitrate').length == 0) {
                let html = "<div>Bitrate: " + "<span id='outAudioStatBitrate' style='font-weight: normal'>" + aBitrate + "</span>" + "</div>";
                $("#outAudioStat").append(html);
            } else {
                $('#outAudioStatBitrate').text(aBitrate);
            }
            audioBytesSent = stats.outboundStream.audio.bytesSent;
        }
    }
    ...
}); |

19. Show WebRTC stream playback statistics

stream.getStats() code:

| Code Block | ||||

|---|---|---|---|---|

| ||||

previewStream.getStats(function (stats) {
    if (stats && stats.inboundStream) {
        if (stats.inboundStream.video) {
            showStat(stats.inboundStream.video, "inVideoStat");
            let vBitrate = (stats.inboundStream.video.bytesReceived - videoBytesReceived) * 8;
            if ($('#inVideoStatBitrate').length == 0) {
                let html = "<div>Bitrate: " + "<span id='inVideoStatBitrate' style='font-weight: normal'>" + vBitrate + "</span>" + "</div>";
                $("#inVideoStat").append(html);
            } else {
                $('#inVideoStatBitrate').text(vBitrate);
            }
            videoBytesReceived = stats.inboundStream.video.bytesReceived;
            ...
        }
        if (stats.inboundStream.audio) {
            showStat(stats.inboundStream.audio, "inAudioStat");
            let aBitrate = (stats.inboundStream.audio.bytesReceived - audioBytesReceived) * 8;
            if ($('#inAudioStatBitrate').length == 0) {
                let html = "<div style='font-weight: bold'>Bitrate: " + "<span id='inAudioStatBitrate' style='font-weight: normal'>" + aBitrate + "</span>" + "</div>";
                $("#inAudioStat").append(html);
            } else {
                $('#inAudioStatBitrate').text(aBitrate);
            }
            audioBytesReceived = stats.inboundStream.audio.bytesReceived;
        }
        ...
    }
}); |
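The statistics handlers compute the bitrate as the difference between two byte counters multiplied by 8, which is the number of bits transferred since the previous getStats() call. That figure equals bits per second only when statistics are polled once per second; the polling interval is not shown in this section. A minimal sketch that normalizes by elapsed time instead is given below. The helper name `createBitrateMeter` and its arguments are hypothetical and not part of the example.

```javascript
// Hypothetical helper: converts a growing byte counter (bytesSent or
// bytesReceived) into bits per second, whatever the polling interval is.
function createBitrateMeter() {
    var lastBytes = null;
    var lastTimeMs = null;
    return function update(totalBytes, nowMs) {
        var bitrate = 0;
        if (lastBytes !== null && nowMs > lastTimeMs && totalBytes >= lastBytes) {
            var bits = (totalBytes - lastBytes) * 8;
            bitrate = Math.round(bits * 1000 / (nowMs - lastTimeMs));
        }
        // A counter that went backwards (for example after a restart)
        // only resets the baseline and reports 0 for this sample.
        lastBytes = totalBytes;
        lastTimeMs = nowMs;
        return bitrate;
    };
}

var videoMeter = createBitrateMeter();
var first = videoMeter(0, 0);            // no previous sample yet
var second = videoMeter(125000, 1000);   // 125000 bytes in 1 s
var third = videoMeter(187500, 1500);    // 62500 bytes in 0.5 s
console.log(first, second, third);
```

One meter per counter (outgoing video, outgoing audio, incoming video, incoming audio) would replace the four `...BytesSent` and `...BytesReceived` variables.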

20. Speech detection using ScriptProcessor interface (any browser except Chrome)

audioContext.createMediaStreamSource(), audioContext.createScriptProcessor() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function detectSpeech(stream, level, latency) {
    var mediaStream = document.getElementById(stream.id()).srcObject;
    var source = audioContext.createMediaStreamSource(mediaStream);
    var processor = audioContext.createScriptProcessor(512);
    processor.onaudioprocess = handleAudio;
    processor.connect(audioContext.destination);
    processor.clipping = false;
    processor.lastClip = 0;
    // threshold
    processor.threshold = level || 0.10;
    processor.latency = latency || 750;
    processor.isSpeech = function () {
        if (!this.clipping) return false;
        if ((this.lastClip + this.latency) < window.performance.now()) this.clipping = false;
        return this.clipping;
    };
    source.connect(processor);
    // Check speech every 500 ms
    speechIntervalID = setInterval(function () {
        if (processor.isSpeech()) {
            $("#talking").css('background-color', 'green');
        } else {
            $("#talking").css('background-color', 'red');
        }
    }, 500);

...

} |

Audio data handler code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function handleAudio(event) {
    var buf = event.inputBuffer.getChannelData(0);
    var bufLength = buf.length;
    var x;
    for (var i = 0; i < bufLength; i++) {
        x = buf[i];
        if (Math.abs(x) >= this.threshold) {
            this.clipping = true;
            this.lastClip = window.performance.now();
        }
    }
} |
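The detection logic in this step does not depend on the Web Audio API itself: a sample above the threshold sets a flag, and the flag is held until the latency period passes without another loud sample. A minimal sketch of that logic with explicit timestamps, so that it can be run and checked without an audio context, might look like this. The name `createLevelDetector` and its interface are hypothetical.

```javascript
// Hypothetical detector mirroring the threshold/latency fields used above.
function createLevelDetector(threshold, latencyMs) {
    var state = {clipping: false, lastClip: 0};
    return {
        // Scan one buffer of samples in [-1, 1] and remember when the
        // threshold was last reached.
        feed: function (samples, nowMs) {
            for (var i = 0; i < samples.length; i++) {
                if (Math.abs(samples[i]) >= threshold) {
                    state.clipping = true;
                    state.lastClip = nowMs;
                    break; // one loud sample is enough for this buffer
                }
            }
        },
        // Speech is reported until `latencyMs` passes without a loud sample.
        isSpeech: function (nowMs) {
            if (state.clipping && state.lastClip + latencyMs < nowMs) {
                state.clipping = false;
            }
            return state.clipping;
        }
    };
}

var detector = createLevelDetector(0.10, 750);
detector.feed(new Float32Array([0.01, -0.02, 0.03]), 0);
var quiet = detector.isSpeech(0);       // nothing reached the threshold
detector.feed(new Float32Array([0.01, -0.4, 0.02]), 100);
var talking = detector.isSpeech(500);   // still inside the 750 ms hold
var expired = detector.isSpeech(900);   // hold period has passed
console.log(quiet, talking, expired);
```

The hold period keeps the indicator from flickering between words, at the cost of reacting to silence up to `latencyMs` late.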

21. Speech detection using incoming audio WebRTC statistics in Chrome browser

stream.getStats() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function detectSpeechChrome(stream, level, latency) {
    statSpeechDetector.threshold = level || 0.010;
    statSpeechDetector.latency = latency || 750;
    statSpeechDetector.clipping = false;
    statSpeechDetector.lastClip = 0;
    speechIntervalID = setInterval(function() {
        stream.getStats(function(stat) {
            let audioStats = stat.inboundStream.audio;
            if(!audioStats) {
                return;
            }
            // Using audioLevel WebRTC stats parameter
            if (audioStats.audioLevel >= statSpeechDetector.threshold) {
                statSpeechDetector.clipping = true;
                statSpeechDetector.lastClip = window.performance.now();
            }
            if ((statSpeechDetector.lastClip + statSpeechDetector.latency) < window.performance.now()) {
                statSpeechDetector.clipping = false;
            }
            if (statSpeechDetector.clipping) {
                $("#talking").css('background-color', 'green');
            } else {
                $("#talking").css('background-color', 'red');
            }
        });
    },500);
} |
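A single polling step of the statistics-based detector can be tried against a stub stream. The sketch below is an illustration under assumptions: `pollSpeech`, `stubStream` and the `render` callback are hypothetical names, the stub calls back synchronously (a real stream reports statistics asynchronously), and the extra guard on `stat.inboundStream` is an addition that the example code does not have.

```javascript
// Hypothetical single polling step: updates `detector` from one
// getStats() result and passes the indicator colour to `render`.
function pollSpeech(stream, detector, nowMs, render) {
    stream.getStats(function (stat) {
        var audioStats = stat && stat.inboundStream && stat.inboundStream.audio;
        if (!audioStats) {
            return; // no incoming audio yet, leave the indicator untouched
        }
        if (audioStats.audioLevel >= detector.threshold) {
            detector.clipping = true;
            detector.lastClip = nowMs;
        }
        if (detector.lastClip + detector.latency < nowMs) {
            detector.clipping = false;
        }
        render(detector.clipping ? "green" : "red");
    });
}

// Stub stream reporting a fixed audio level, or no audio when level is null.
function stubStream(audioLevel) {
    return {
        getStats: function (callback) {
            callback(audioLevel === null
                ? {inboundStream: {}}
                : {inboundStream: {audio: {audioLevel: audioLevel}}});
        }
    };
}

var detectorState = {threshold: 0.010, latency: 750, clipping: false, lastClip: 0};
var colours = [];
function record(colour) { colours.push(colour); }

pollSpeech(stubStream(null), detectorState, 0, record);     // no audio stats
pollSpeech(stubStream(0.2), detectorState, 1000, record);   // loud
pollSpeech(stubStream(0.0), detectorState, 1500, record);   // silent, within hold
pollSpeech(stubStream(0.0), detectorState, 2000, record);   // silent, hold expired
console.log(colours);
```

Passing the time and the render function as arguments keeps the step free of `window.performance` and jQuery, so the same function could be called from the `setInterval` callback with real values.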