...

This example can be used to publish a WebRTC stream from the device screen together with system audio or microphone audio. The example works with iOS SDK 2.6.82 and newer.

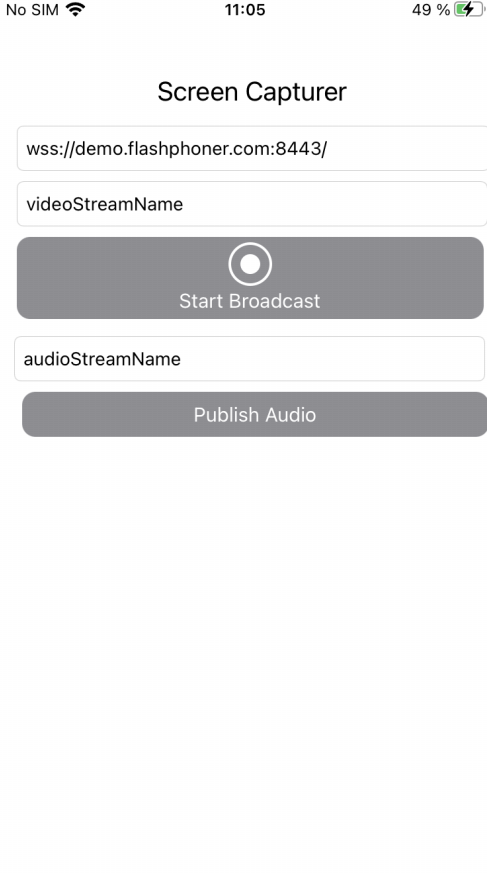

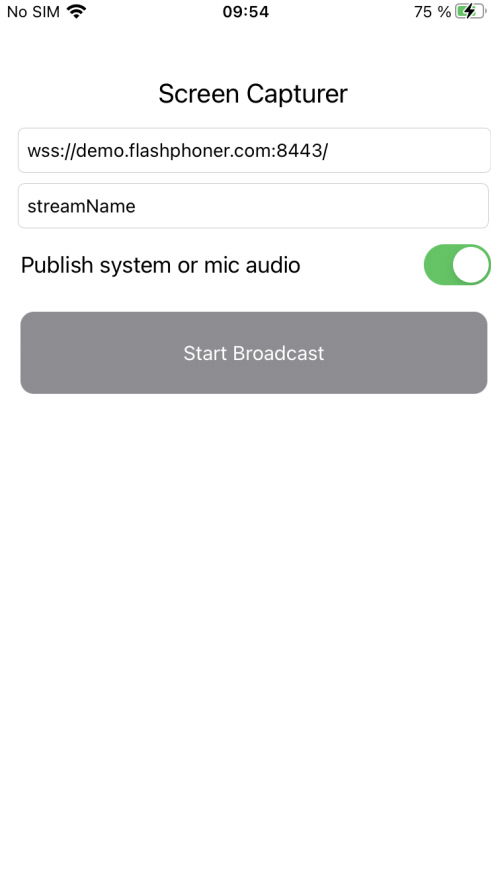

The main application view is shown below. Inputs:

- WCS Websocket URL

- screen video stream name to publish

- audio stream name to publish

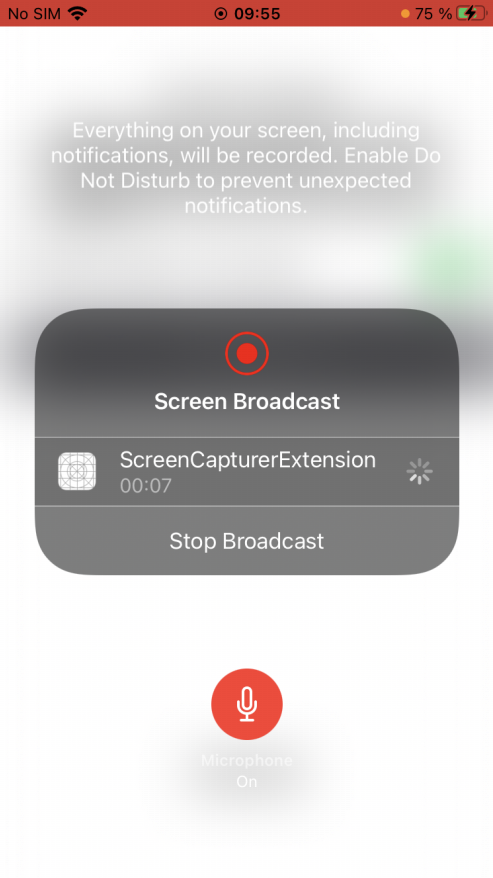

Application view when screen sharing is started

A special extension process is used to capture video from the screen. This process runs until the device is locked or screen capturing is stopped manually.

...

To analyze the code, take the version of the ScreenCapturer example that is available on GitHub.

Classes

- main application view class: ScreenCapturerViewController (implementation file ScreenCapturerViewController.swift)

- extension implementation class: ScreenCapturerExtensionHandler (implementation file ScreenCapturerExtensionHandler.swift)

1. Import API

```swift
import FPWCSApi2Swift
```

2. Screen capturer extension parameters setup

The UserDefaults suiteName parameter must be equal to the extension application group id.

```swift
@IBAction func broadcastBtnPressed(_ sender: Any) {
    ...
    pickerView.showsMicrophoneButton = systemOrMicSwitch.isOn
    // suiteName must match the App Group id shared with the extension
    let userDefaults = UserDefaults.init(suiteName: "group.com.flashphoner.ScreenCapturerSwift")
    userDefaults?.set(urlField.text, forKey: "wcsUrl")
    userDefaults?.set(publishVideoName.text, forKey: "streamName")
    userDefaults?.set(systemOrMicSwitch.isOn, forKey: "useMic")
    ...
}
```
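Both sides of this hand-off must open the same suite and use the same keys, so it can help to keep the key names in one place. The following is a minimal sketch in plain Swift, not code from the example. `KeyValueStore`, `InMemoryStore` and `BroadcastSettings` are hypothetical names. Only the keys `wcsUrl`, `streamName` and `useMic` come from the example. `UserDefaults` already has the three methods the protocol requires, so in the app and the extension you would pass `UserDefaults(suiteName: "group.com.flashphoner.ScreenCapturerSwift")`. The in-memory store only makes the logic testable outside an App Group.

```swift
import Foundation

// Hypothetical protocol covering the UserDefaults calls used for the hand-off.
protocol KeyValueStore {
    func set(_ value: Any?, forKey key: String)
    func object(forKey key: String) -> Any?
    func string(forKey key: String) -> String?
}

// UserDefaults already implements these methods, so it conforms as-is.
extension UserDefaults: KeyValueStore {}

// Hypothetical in-memory store, useful for tests without an App Group.
final class InMemoryStore: KeyValueStore {
    private var values: [String: Any] = [:]
    func set(_ value: Any?, forKey key: String) { values[key] = value }
    func object(forKey key: String) -> Any? { values[key] }
    func string(forKey key: String) -> String? { values[key] as? String }
}

// Illustrative bundle of the values the app passes to the extension.
struct BroadcastSettings: Equatable {
    var wcsUrl: String
    var streamName: String
    var useMic: Bool

    // App side: write the values before the broadcast picker is shown.
    func save(to store: KeyValueStore) {
        store.set(wcsUrl, forKey: "wcsUrl")
        store.set(streamName, forKey: "streamName")
        store.set(useMic, forKey: "useMic")
    }

    // Extension side: read them back, keeping the previous value for any
    // missing key (the same idea as `wcsUrl ?? self.wcsUrl` in the example).
    static func load(from store: KeyValueStore,
                     fallback: BroadcastSettings) -> BroadcastSettings {
        BroadcastSettings(
            wcsUrl: store.string(forKey: "wcsUrl") ?? fallback.wcsUrl,
            streamName: store.string(forKey: "streamName") ?? fallback.streamName,
            useMic: (store.object(forKey: "useMic") as? Bool) ?? fallback.useMic
        )
    }
}
```

Keeping the keys inside one type means a typo can no longer make the app write `streamName` while the extension reads a differently spelled key.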

3. Receiving screen capture parameters in extension code

```swift
override func broadcastStarted(withSetupInfo setupInfo: [String : NSObject]?) {
    ...
    let userDefaults = UserDefaults.init(suiteName: "group.com.flashphoner.ScreenCapturerSwift")
    let wcsUrl = userDefaults?.string(forKey: "wcsUrl")
    // Drop the current session if the server URL changed or the session is not established
    if wcsUrl != self.wcsUrl || session?.getStatus() != .fpwcsSessionStatusEstablished {
        session?.disconnect()
        session = nil
    }
    self.wcsUrl = wcsUrl ?? self.wcsUrl
    let streamName = userDefaults?.string(forKey: "streamName")
    self.streamName = streamName ?? self.streamName
    ...
}
```
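The reconnect condition in `broadcastStarted` can be isolated as a pure function, which makes its edge cases easier to see. This is a sketch, not the example's code. `SessionState` is a hypothetical stand-in for the SDK session status, reduced to whether the session is established. `shouldResetSession` and `resolvedUrl` are illustrative names.

```swift
// Hypothetical stand-in for the SDK session status; the example only
// checks whether the status equals .fpwcsSessionStatusEstablished.
enum SessionState {
    case established
    case notEstablished
}

// Mirrors `wcsUrl != self.wcsUrl || session?.getStatus() != .fpwcsSessionStatusEstablished`.
// A nil state stands for "no session object yet".
func shouldResetSession(storedUrl: String?, currentUrl: String, state: SessionState?) -> Bool {
    storedUrl != currentUrl || state != .established
}

// Mirrors `self.wcsUrl = wcsUrl ?? self.wcsUrl`.
func resolvedUrl(storedUrl: String?, currentUrl: String) -> String {
    storedUrl ?? currentUrl
}
```

As in the example, a missing `wcsUrl` key (`storedUrl == nil`) also forces a reset, because `nil` never equals the current URL. `resolvedUrl` then keeps the previous URL.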

4. Screen capturer object setup to capture audio

FPWCSApi2.getAudioManager().useAudioModule code

```swift
// Select the audio source chosen in the application (microphone or system audio)
let useMic = userDefaults?.bool(forKey: "useMic")
capturer = ScreenRTCVideoCapturer(useMic: useMic ?? true)
FPWCSApi2.getAudioManager().useAudioModule(true)
```
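One subtlety in this step: `UserDefaults.bool(forKey:)` returns `false` when the key has never been written. As a result, `useMic ?? true` falls back to `true` only when the suite itself could not be opened (`userDefaults` is `nil`). If microphone capture should also be the default when the app never stored `useMic`, one option is to read the raw object instead. The helper below is a hypothetical sketch. It takes the value that `userDefaults?.object(forKey: "useMic")` would return.

```swift
// Hypothetical helper: interprets a raw defaults value as the "use microphone"
// flag. Unlike UserDefaults.bool(forKey:), a missing key (nil) or a value of
// another type maps to `defaultValue` instead of silently becoming false.
func resolveUseMic(_ stored: Any?, defaultValue: Bool = true) -> Bool {
    (stored as? Bool) ?? defaultValue
}
```

With this helper, the capturer would be created as `ScreenRTCVideoCapturer(useMic: resolveUseMic(userDefaults?.object(forKey: "useMic")))`.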

5. Session creation to publish screen stream

WCSSession, WCSSession.connect code

```swift
if (session == nil) {
    let options = FPWCSApi2SessionOptions()
    options.urlServer = self.wcsUrl
    options.appKey = "defaultApp"
    do {
        try session = WCSSession(options)
    } catch {
        print(error)
    }
    ...
    session?.connect()
}
```
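The `if (session == nil)` guard makes session creation idempotent. A session is built and connected only when none exists, and the reset branch in step 3 sets `session` to `nil` when a new one is needed. The sketch below captures that pattern without the SDK. `ConnectableSession` and `SessionHolder` are hypothetical names, and the factory closure stands in for `WCSSession(options)`.

```swift
// Hypothetical minimal session interface; stands in for WCSSession.
protocol ConnectableSession: AnyObject {
    func connect()
}

// Creates and connects a session only when none exists, like the
// `if (session == nil) { ... }` block in the example.
final class SessionHolder {
    private(set) var session: ConnectableSession?
    private let makeSession: (String) throws -> ConnectableSession

    // `makeSession` plays the role of `WCSSession(options)` with
    // options.urlServer set to the given URL.
    init(makeSession: @escaping (String) throws -> ConnectableSession) {
        self.makeSession = makeSession
    }

    func ensureConnected(url: String) {
        guard session == nil else { return }  // reuse the existing session
        do {
            session = try makeSession(url)
        } catch {
            print(error)  // the example only logs construction errors
            return
        }
        session?.connect()
    }

    // Counterpart of `session = nil` in the reset branch.
    func reset() {
        session = nil
    }
}
```

Injecting the factory keeps the guard logic testable with a mock session, while the real code would construct the SDK session directly.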

6. Screen stream publishing

WCSSession.createStream, WCSStream.publish code

The following parameters are passed to the createStream method:

- stream name to publish

- ScreenRTCVideoCapturer object to capture video from screen

```swift
func onConnected(_ session: WCSSession) throws {
    let options = FPWCSApi2StreamOptions()
    options.name = streamName
    options.constraints = FPWCSApi2MediaConstraints(audio: false, videoCapturer: capturer)
    try publishStream = session.createStream(options)
    ...
    try publishStream?.publish()
}
```

7. ScreenRTCVideoCapturer class initialization

```swift
fileprivate class ScreenRTCVideoCapturer: RTCVideoCapturer {
    let kNanosecondsPerSecond = 1000000000
    var useMic: Bool = true

    init(useMic: Bool) {
        super.init()
        self.useMic = useMic
    }
    ...
}
```
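The `kNanosecondsPerSecond` constant suggests that frame timestamps are converted to nanoseconds before frames are handed to WebRTC, which expresses video frame time in nanoseconds (the `timeStampNs` argument of `RTCVideoFrame`). This use is an assumption, because the example elides the frame handling. The sketch below shows the conversion, assuming the presentation time is already available in seconds. In the extension, that value could come from `CMTimeGetSeconds(CMSampleBufferGetPresentationTimeStamp(sampleBuffer))`. The function name is hypothetical.

```swift
// Same value as the constant in ScreenRTCVideoCapturer, typed as Int64
// because WebRTC frame timestamps are Int64 nanoseconds.
let kNanosecondsPerSecond: Int64 = 1_000_000_000

// Hypothetical helper: converts a presentation time in seconds to
// nanoseconds, rounding to the nearest nanosecond so that floating-point
// error does not truncate the result.
func timestampNs(fromSeconds seconds: Double) -> Int64 {
    Int64((seconds * Double(kNanosecondsPerSecond)).rounded())
}
```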

8. System audio capturing in extension code

FPWCSApi2.getAudioManager().getAudioModule().deliverRecordedData() code

```swift
func processSampleBuffer(_ sampleBuffer: CMSampleBuffer, with sampleBufferType: RPSampleBufferType) {
    switch sampleBufferType {
    ...
    case RPSampleBufferType.audioApp:
        // Deliver system audio only when microphone capturing is not selected
        if (!useMic) {
            FPWCSApi2.getAudioManager().getAudioModule().deliverRecordedData(sampleBuffer)
        }
        break
    ...
    }
}
```


9. Microphone audio capturing in extension code

FPWCSApi2.getAudioManager().getAudioModule().deliverRecordedData() code

```swift
func processSampleBuffer(_ sampleBuffer: CMSampleBuffer, with sampleBufferType: RPSampleBufferType) {
    switch sampleBufferType {
    ...
    case RPSampleBufferType.audioMic:
        // Deliver microphone audio only when microphone capturing is selected
        if (useMic) {
            FPWCSApi2.getAudioManager().getAudioModule().deliverRecordedData(sampleBuffer)
        }
        break
    ...
    }
}
```
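The system audio and microphone audio steps together implement one rule: audio from the selected source is passed to the SDK audio module, and the other audio source is ignored. The sketch below expresses that rule as a pure function. `SampleKind` is a hypothetical local mirror of ReplayKit's `RPSampleBufferType` cases (`.video`, `.audioApp`, `.audioMic`), and `Route` and `route(_:useMic:)` are illustrative names. The video case is an assumption, since the example code elides it.

```swift
// Hypothetical local mirror of ReplayKit's RPSampleBufferType cases,
// so the routing rule can be checked without ReplayKit.
enum SampleKind {
    case video, audioApp, audioMic
}

// Where a sample buffer ends up under the example's rule.
enum Route: Equatable {
    case videoCapturer  // assumed: video frames feed ScreenRTCVideoCapturer
    case audioModule    // passed to deliverRecordedData(_:)
    case dropped        // the audio source that was not selected
}

func route(_ kind: SampleKind, useMic: Bool) -> Route {
    switch kind {
    case .video:
        return .videoCapturer
    case .audioApp:
        // System audio is used only when the microphone is not selected
        return useMic ? .dropped : .audioModule
    case .audioMic:
        // Microphone audio is used only when it is selected
        return useMic ? .audioModule : .dropped
    }
}
```

Because exactly one audio kind maps to `.audioModule` for any `useMic` value, the published stream never mixes both sources.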

Known limits

1. Music from iTunes will not play when system audio capturing is active.

2. The ScreenCapturerSwift extension receives silence in sampleBuffer, for both microphone and system audio, if another application is using the microphone. After the other application releases the microphone, screen publishing must be stopped and started again to receive audio.