...

Capturing a video stream from HTML5 Canvas and preparing for publishing

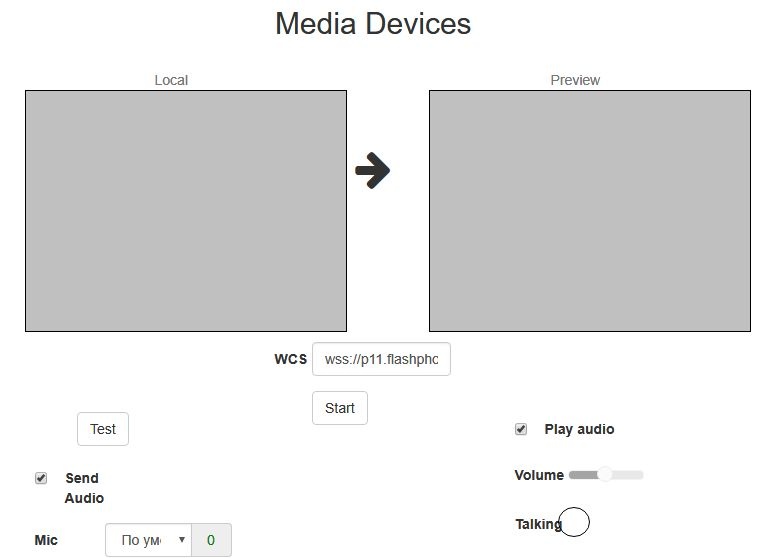

1. For the test we use:

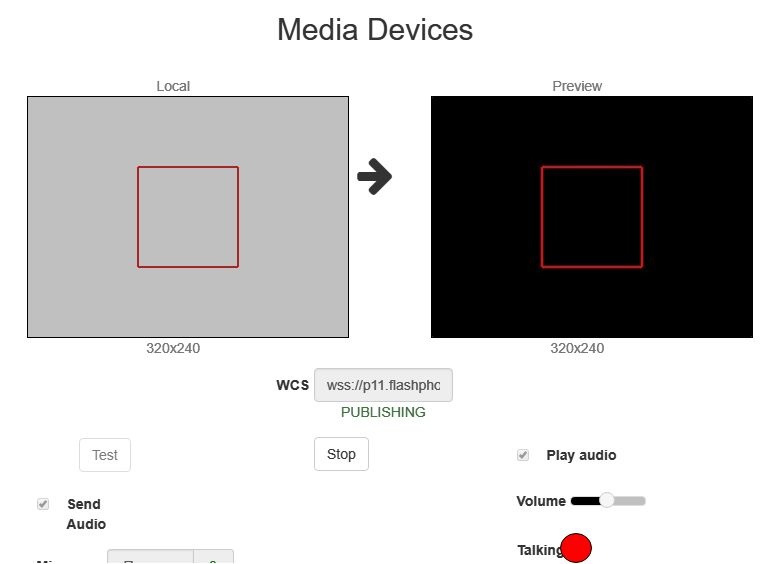

- WCS server demo.flashphoner.com

- Canvas Streaming web application in the Chrome browser

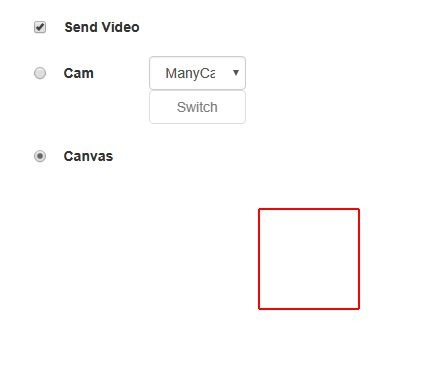

2. In the "Send Video" section select "Canvas"

3. Click the "Start" button. Broadcasting of the image on the HTML5 Canvas (red frame) starts:

4. Make sure the stream is sent to the server and the system operates normally in 2. Press "Start". This starts streaming from HTML5 Canvas on which test video fragment is played:

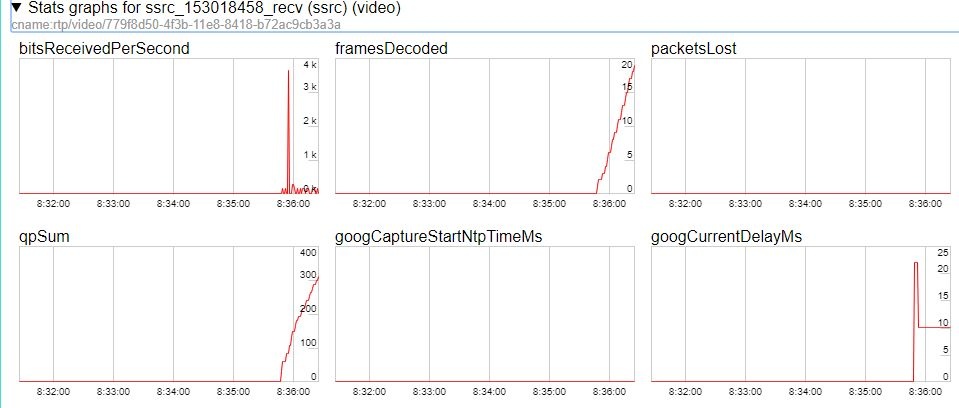

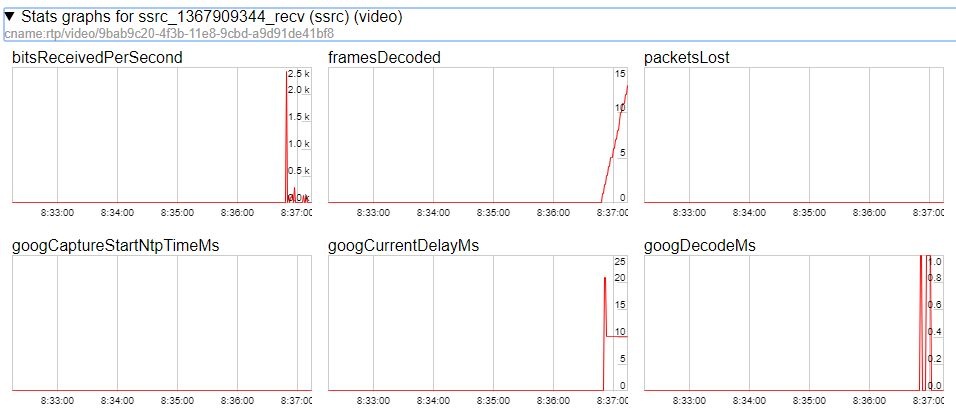

3. To make shure that stream goes to server, open chrome://webrtc-internals

5. Open Two Way Streaming in a new window, click Connect and specify the stream id, then click Play.

6. Playback diagrams in

4. Playback graphs chrome://webrtc-internals

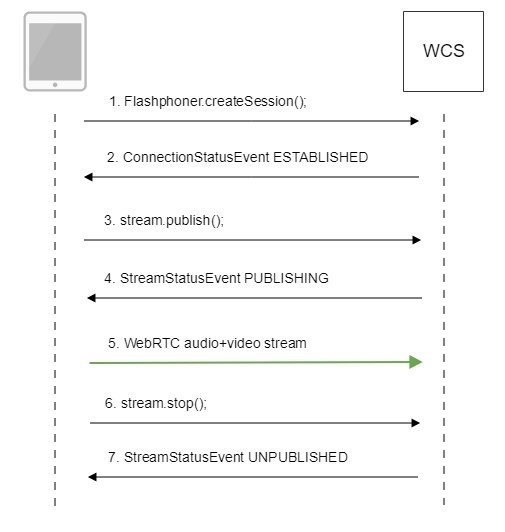

Call flow

Below is the call flow in the Canvas Streaming example

canvas_streaming.html

canvas_streaming.js

1. Establishing a connection to the server.

Flashphoner.createSession(); code

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.createSession({urlServer: url}).on(SESSION_STATUS.ESTABLISHED, function(session){
    //session connected, start streaming
    startStreaming(session);
}).on(SESSION_STATUS.DISCONNECTED, function(){
    setStatus(SESSION_STATUS.DISCONNECTED);
    onStopped();
}).on(SESSION_STATUS.FAILED, function(){
    setStatus(SESSION_STATUS.FAILED);
    onStopped();
}); |

...

ConnectionStatusEvent ESTABLISHED code

| Code Block | ||||

|---|---|---|---|---|

| ||||

Flashphoner.createSession({urlServer: url}).on(SESSION_STATUS.ESTABLISHED, function(session){
    //session connected, start streaming
    startStreaming(session);
    ...
}); |

2.1. Set up and start HTML5 Canvas capturing

getConstraints(); code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function getConstraints() {
    var constraints;
    var stream = createCanvasStream();
    constraints = {
        audio: false,
        video: false,
        customStream: stream
    };
    return constraints;
} |
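As a minimal sketch of the same idea outside the demo, the helper below wraps a stream captured from a canvas into a constraints object of the shape shown above. The name `buildCanvasConstraints` and its `fps` parameter are hypothetical and not part of the WebSDK; `canvas.captureStream(frameRate)` is the standard `HTMLCanvasElement` method, and the `customStream` key simply mirrors the example.

```javascript
// Hypothetical helper (not part of the WebSDK): builds a constraints object
// of the same shape as the one returned by getConstraints() in the example.
function buildCanvasConstraints(canvas, fps) {
    if (!canvas || typeof canvas.captureStream !== "function") {
        throw new Error("Canvas capturing is not supported");
    }
    // audio and video are set to false as in the example;
    // the canvas capture stream is passed as customStream
    return {
        audio: false,
        video: false,
        customStream: canvas.captureStream(fps || 30)
    };
}
```

Checking for `captureStream` before calling it lets the page report an unsupported browser instead of failing inside the capture call.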

createCanvasStream():

set up video capturing from Canvas code

| Code Block | ||||

|---|---|---|---|---|

| ||||

var canvasContext = canvas.getContext("2d");
var canvasStream = canvas.captureStream(30);
mockVideoElement = document.createElement("video");
mockVideoElement.src = '../../dependencies/media/test_movie.mp4';
mockVideoElement.loop = true;
mockVideoElement.muted = true; |

draw on Canvas with 30 fps code

| Code Block | ||||

|---|---|---|---|---|

| ||||

mockVideoElement.addEventListener("play", function () {
    var $this = this;
    (function loop() {
        if (!$this.paused && !$this.ended) {
            canvasContext.drawImage($this, 0, 0);
            setTimeout(loop, 1000 / 30); // drawing at 30fps
        }
    })();
}, 0); |
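The drawing loop above runs until the video is paused or ends. A possible variation, sketched here under the assumption that you also want to stop drawing on demand, returns a handle with a `stop()` method. The names `startDrawLoop`, `schedule` and `cancel` are hypothetical; the timer functions are injectable only so that the loop can be exercised without a browser.

```javascript
// Hypothetical helper: redraws `video` onto `context` about `fps` times per
// second until the video pauses or ends, or until stop() is called.
// `schedule` and `cancel` default to setTimeout and clearTimeout.
function startDrawLoop(video, context, fps, schedule, cancel) {
    schedule = schedule || setTimeout;
    cancel = cancel || clearTimeout;
    var timer = null;
    var stopped = false;

    function loop() {
        timer = null;
        if (stopped || video.paused || video.ended) {
            return;
        }
        context.drawImage(video, 0, 0);
        timer = schedule(loop, 1000 / fps);
    }

    loop();
    return {
        stop: function () {
            stopped = true;
            if (timer !== null) {
                cancel(timer);
                timer = null;
            }
        }
    };
}
```

In the example page this could be called from the `play` listener as `startDrawLoop(this, canvasContext, 30)`, with the returned handle stored for later cleanup.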

playback test video fragment on Canvas code

| Code Block | ||||

|---|---|---|---|---|

| ||||

mockVideoElement.play(); |

set up audio capturing from Canvas code

| Code Block | ||||

|---|---|---|---|---|

| ||||

if ($("#sendAudio").is(':checked')) {
    mockVideoElement.muted = false;
    try {
        var audioContext = new (window.AudioContext || window.webkitAudioContext)();
    } catch (e) {
        console.warn("Failed to create audio context");
    }
    var source = audioContext.createMediaElementSource(mockVideoElement);
    var destination = audioContext.createMediaStreamDestination();
    source.connect(destination);
    canvasStream.addTrack(destination.stream.getAudioTracks()[0]);
} |
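In the snippet above, if the audio context cannot be created, `audioContext` stays undefined and the following call fails. A minimal sketch of a more defensive variant is given below; it assumes that publishing video only is an acceptable fallback. The helper name `attachAudioTrack` and the injected `AudioContextCtor` parameter are hypothetical, while `createMediaElementSource`, `createMediaStreamDestination` and `addTrack` are standard Web Audio and MediaStream calls.

```javascript
// Hypothetical helper: routes the sound of `mediaElement` into `canvasStream`.
// `AudioContextCtor` is injected, for example
// window.AudioContext || window.webkitAudioContext.
// Returns true if an audio track was added, false otherwise.
function attachAudioTrack(canvasStream, mediaElement, AudioContextCtor) {
    var audioContext;
    try {
        audioContext = new AudioContextCtor();
    } catch (e) {
        console.warn("Failed to create audio context");
        return false; // assumption: fall back to video only
    }
    var source = audioContext.createMediaElementSource(mediaElement);
    var destination = audioContext.createMediaStreamDestination();
    source.connect(destination);
    var tracks = destination.stream.getAudioTracks();
    if (tracks.length === 0) {
        return false;
    }
    canvasStream.addTrack(tracks[0]);
    return true;
}
```

The boolean result lets the caller decide whether to show a warning or to continue silently when no audio track is available.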

3. Publishing the stream.

stream.publish(); code

| Code Block | ||||

|---|---|---|---|---|

| ||||

session.createStream({
    name: streamName,
    display: localVideo,
    cacheLocalResources: true,
    constraints: constraints
}).on(STREAM_STATUS.PUBLISHING, function (stream) {
    ...
}).on(STREAM_STATUS.UNPUBLISHED, function () {
    ...
}).on(STREAM_STATUS.FAILED, function () {
    ...
}).publish(); |

4. Receiving from the server an event confirming successful publishing of the stream.

StreamStatusEvent, status PUBLISHING code

| Code Block | ||||

|---|---|---|---|---|

| ||||

session.createStream({
    ...
}).on(STREAM_STATUS.PUBLISHING, function (stream) {
    setStatus("#publishStatus", STREAM_STATUS.PUBLISHING);
    playStream();
    onPublishing(stream);
}).on(STREAM_STATUS.UNPUBLISHED, function () {
    ...
}).on(STREAM_STATUS.FAILED, function () {
    ...
}).publish(); |

5. Sending the audio-video stream via WebRTC

6. Stopping publishing the stream.

stream.stop(); code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function stopStreaming() {
    ...
    if (publishStream != null && publishStream.published()) {
        publishStream.stop();
    }
    stopCanvasStream();
} |

stopCanvasStream() code

| Code Block | ||||

|---|---|---|---|---|

| ||||

function stopCanvasStream() {
    if(mockVideoElement) {
        mockVideoElement.pause();
        mockVideoElement.removeEventListener('play', null);
        mockVideoElement = null;
    }
} |
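Note that `removeEventListener` detaches a listener only when it receives the same function reference that was passed to `addEventListener`, so a call with `null` leaves the `play` handler attached. The example still works because the element is paused and dropped. If you adapt the code and need the listener detached, one possible approach is sketched below; the name `createCanvasPlayer` is hypothetical and not part of the example.

```javascript
// Hypothetical sketch: keep the "play" handler reference so that the same
// function can be passed to removeEventListener when stopping.
function createCanvasPlayer(videoElement, onPlay) {
    videoElement.addEventListener("play", onPlay);
    return {
        stop: function () {
            if (videoElement) {
                videoElement.pause();
                videoElement.removeEventListener("play", onPlay);
                videoElement = null; // makes repeated stop() calls harmless
            }
        }
    };
}
```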

7. Receiving from the server an event confirming successful unpublishing of the stream.

StreamStatusEvent, status UNPUBLISHED code

| Code Block | ||||

|---|---|---|---|---|

| ||||

session.createStream({
    ...
}).on(STREAM_STATUS.PUBLISHING, function (stream) {
    ...
}).on(STREAM_STATUS.UNPUBLISHED, function () {
    setStatus("#publishStatus", STREAM_STATUS.UNPUBLISHED);
    disconnect();
}).on(STREAM_STATUS.FAILED, function () {
    ...
}).publish(); |

To developer

The capability to capture a video stream from an HTML5 Canvas element is available in the WCS WebSDK starting from this version of the JavaScript API. The source code of the example is located in examples/demo/streaming/canvas_streaming/.

You can use this capability to capture your own video stream rendered in the browser, for example:

...