...

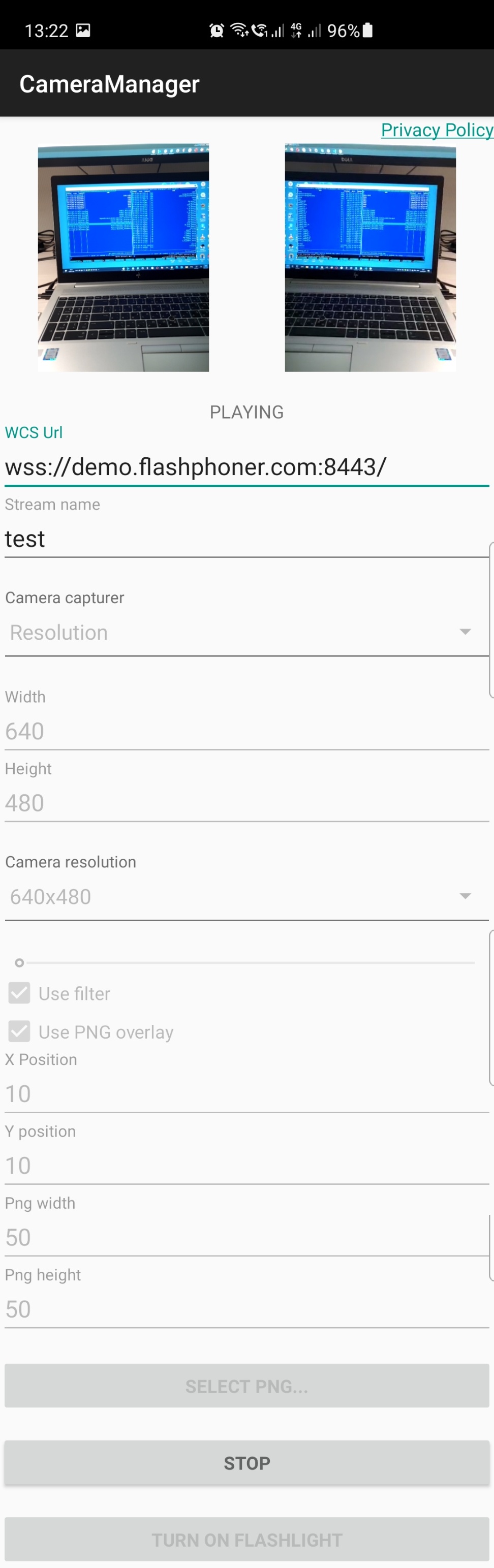

- Select PNG - button to select an image from the device gallery

- Use PNG overlay - apply the PNG image to the published stream

- X position, Y position - coordinates of the top left corner of the overlaid image, in pixels

- PNG width - width of the PNG picture in the frame, in pixels

- PNG height - height of the PNG picture in the frame, in pixels

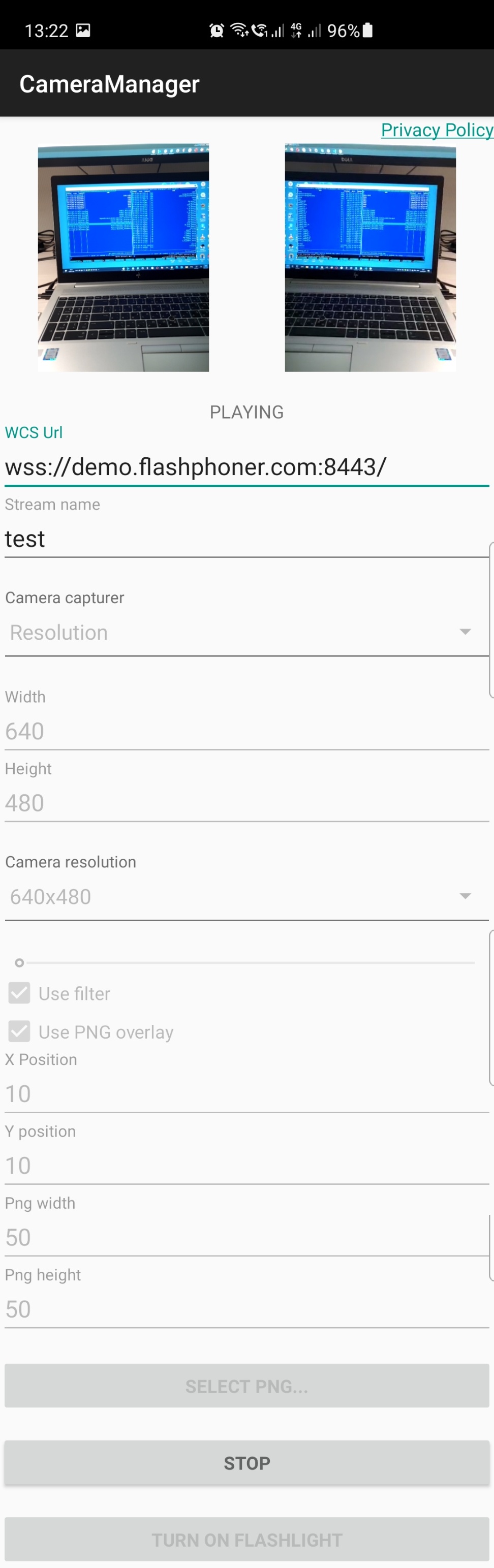

Resolution selection screenshot:

- Camera resolution - spinner listing the resolutions supported by the camera


Analyzing example code

To analyze the code, use the following classes of the camera-manager example, which is available for download in build 1.1.0.4247:

- CameraManagerActivity.java, the main application activity class

- ZoomCameraCapturer.java, the Camera1Capturer implementation for the Zoom example

- ZoomCameraEnumerator.java, the Camera1Enumerator implementation for the Zoom example

- ZoomCameraSession.java, the CameraSession implementation for the Zoom example

- GPUImageCameraCapturer.java, the Camera1Capturer implementation for the GPUImage example

- GPUImageCameraEnumerator.java, the Camera1Enumerator implementation for the GPUImage example

- GPUImageCameraSession.java, the CameraSession implementation for the GPUImage example

- PngOverlayCameraCapturer.java, the Camera1Capturer implementation for the PngOverlay example

- PngOverlayCameraEnumerator.java, the Camera1Enumerator implementation for the PngOverlay example

- PngOverlayCameraSession.java, the CameraSession implementation for the PngOverlay example

- ResolutionCameraCapturer.java, the Camera1Capturer implementation for the Resolution example

- ResolutionCameraEnumerator.java, the Camera1Enumerator implementation for the Resolution example

- ResolutionCameraSession.java, the CameraSession implementation for the Resolution example

Note that these implementation classes are placed in the org.webrtc package. This is necessary to access the camera capturing and management functions.

1. API initialization.

Flashphoner.init() code

```java
Flashphoner.init(this);
```

...

Flashphoner.createSession() code

A SessionOptions object with the following parameters is passed to the method:

- URL of the WCS server

- SurfaceViewRenderer localRenderer to display the published stream (with the changes applied)

- SurfaceViewRenderer remoteRenderer to display the played stream

```java
sessionOptions = new SessionOptions(mWcsUrlView.getText().toString());
sessionOptions.setLocalRenderer(localRender);
sessionOptions.setRemoteRenderer(remoteRender);

/**
 * Session for connection to WCS server is created with method createSession().
 */
session = Flashphoner.createSession(sessionOptions);
```

3. Connection establishment.

Session.connect() code

```java
session.connect(new Connection());
```

4. Receiving the event confirming successful connection.

session.onConnected() code

```java
@Override
public void onConnected(final Connection connection) {
    runOnUiThread(new Runnable() {
        @Override
        public void run() {
            mStatusView.setText(connection.getStatus());
            ...
        }
    });
}
```

...

Flashphoner.getMediaDevices().getVideoList(), Flashphoner.getCameraEnumerator().isBackFacing() code

```java
int cameraId = 0;
List<MediaDevice> videoList = Flashphoner.getMediaDevices().getVideoList();
for (MediaDevice videoDevice : videoList) {
    String videoDeviceName = videoDevice.getLabel();
    if (Flashphoner.getCameraEnumerator().isBackFacing(videoDeviceName)) {
        cameraId = videoDevice.getId();
        break;
    }
}
```
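The loop above stops at the first camera whose label the enumerator reports as back-facing and otherwise keeps camera 0. The same selection logic can be isolated and tested in plain Java. This is a minimal sketch: Device is a hypothetical stand-in for the SDK's MediaDevice, and the back-facing check is passed in as a predicate instead of calling Flashphoner.getCameraEnumerator().isBackFacing().

```java
import java.util.List;
import java.util.function.Predicate;

/** Hypothetical stand-in for the SDK's MediaDevice: only an id and a label. */
final class Device {
    final int id;
    final String label;

    Device(int id, String label) {
        this.id = id;
        this.label = label;
    }
}

final class CameraPicker {
    private CameraPicker() {}

    /**
     * Returns the id of the first device whose label is accepted by isBackFacing,
     * or defaultId when no device matches (the example falls back to 0).
     */
    static int pickCameraId(List<Device> devices, Predicate<String> isBackFacing, int defaultId) {
        for (Device device : devices) {
            if (isBackFacing.test(device.label)) {
                return device.id;
            }
        }
        return defaultId;
    }
}
```

Passing the check as a predicate keeps the selection rule independent of the SDK, so it can be exercised without a device.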

...

StreamOptions.setConstraints(), Session.createStream() code

```java
StreamOptions streamOptions = new StreamOptions(streamName);
VideoConstraints videoConstraints = new VideoConstraints();
videoConstraints.setVideoFps(25);
videoConstraints.setCameraId(cameraId);
Constraints constraints = new Constraints(true, true);
constraints.setVideoConstraints(videoConstraints);
streamOptions.setConstraints(constraints);

/**
 * Stream is created with method Session.createStream().
 */
publishStream = session.createStream(streamOptions);
```

...

ActivityCompat.requestPermissions() code

```java
@Override
public void onConnected(final Connection connection) {
    runOnUiThread(new Runnable() {
        @Override
        public void run() {
            ...
            ActivityCompat.requestPermissions(CameraManagerActivity.this,
                    new String[]{Manifest.permission.RECORD_AUDIO, Manifest.permission.CAMERA},
                    PUBLISH_REQUEST_CODE);
            ...
        }
        ...
    });
}
```

8. Stream publishing after permissions are granted.

Stream.publish() code

```java
@Override
public void onRequestPermissionsResult(int requestCode,
                                       @NonNull String[] permissions, @NonNull int[] grantResults) {
    switch (requestCode) {
        case PUBLISH_REQUEST_CODE: {
            if (grantResults.length == 0 ||
                    grantResults[0] != PackageManager.PERMISSION_GRANTED ||
                    grantResults[1] != PackageManager.PERMISSION_GRANTED) {
                muteButton();
                session.disconnect();
                Log.i(TAG, "Permission has been denied by user");
            } else {
                /**
                 * Method Stream.publish() is called to publish stream.
                 */
                publishStream.publish();
                Log.i(TAG, "Permission has been granted by user");
            }
            break;
        }
        ...
    }
}
```
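The handler above checks exactly two results, matching the two permissions requested. A sketch of a check that works for any number of requested permissions follows. PERMISSION_GRANTED here mirrors the value of Android's PackageManager.PERMISSION_GRANTED (0), and the PermissionCheck class is hypothetical. An empty results array is treated as a denial, since Android delivers empty arrays when the permission request is interrupted.

```java
/**
 * Sketch of the grant check used in onRequestPermissionsResult().
 * PERMISSION_GRANTED mirrors android.content.pm.PackageManager.PERMISSION_GRANTED.
 */
final class PermissionCheck {
    static final int PERMISSION_GRANTED = 0;

    private PermissionCheck() {}

    /** True only if the results array is non-empty and every entry is granted. */
    static boolean allGranted(int[] grantResults) {
        if (grantResults == null || grantResults.length == 0) {
            // The request was cancelled or interrupted.
            return false;
        }
        for (int result : grantResults) {
            if (result != PERMISSION_GRANTED) {
                return false;
            }
        }
        return true;
    }
}
```

With this helper, the condition in onRequestPermissionsResult() could read if (!PermissionCheck.allGranted(grantResults)) without depending on how many permissions were requested.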

...

Session.createStream(), Stream.play() code

```java
publishStream.on(new StreamStatusEvent() {
    @Override
    public void onStreamStatus(final Stream stream, final StreamStatus streamStatus) {
        runOnUiThread(new Runnable() {
            @Override
            public void run() {
                if (StreamStatus.PUBLISHING.equals(streamStatus)) {
                    ...
                    /**
                     * The options for the stream to play are set.
                     * The stream name is passed when StreamOptions object is created.
                     */
                    StreamOptions streamOptions = new StreamOptions(streamName);
                    streamOptions.setConstraints(new Constraints(true, true));

                    /**
                     * Stream is created with method Session.createStream().
                     */
                    playStream = session.createStream(streamOptions);
                    ...
                    /**
                     * Method Stream.play() is called to start playback of the stream.
                     */
                    playStream.play();
                } else {
                    Log.e(TAG, "Can not publish stream " + stream.getName() + " " + streamStatus);
                    onStopped();
                }
                mStatusView.setText(streamStatus.toString());
            }
        });
    }
});
```

10. Closing the connection.

Session.disconnect() code

```java
mStartButton.setOnClickListener(new OnClickListener() {
    @Override
    public void onClick(View view) {
        muteButton();
        if (mStartButton.getTag() == null || Integer.valueOf(R.string.action_start).equals(mStartButton.getTag())) {
            ...
        } else {
            /**
             * Connection to WCS server is closed with method Session.disconnect().
             */
            session.disconnect();
        }
        ...
    }
});
```

...

session.onDisconnection() code

```java
@Override
public void onDisconnection(final Connection connection) {
    runOnUiThread(new Runnable() {
        @Override
        public void run() {
            mStatusView.setText(connection.getStatus());
            onStopped();
        }
    });
}
```

12. Choosing an example.

code

```java
mCameraCapturer.setOnItemChosenListener(new LabelledSpinner.OnItemChosenListener() {
    @Override
    public void onItemChosen(View labelledSpinner, AdapterView<?> adapterView, View itemView, int position, long id) {
        String captureType = getResources().getStringArray(R.array.camera_capturer)[position];
        switch (captureType) {
            case "Flashlight":
                changeFlashlightCamera();
                break;
            case "Zoom":
                changeZoomCamera();
                break;
            case "GPUImage":
                changeGpuImageCamera();
                break;
            case "PNG overlay":
                changePngOverlayCamera();
                break;
        }
    }

    @Override
    public void onNothingChosen(View labelledSpinner, AdapterView<?> adapterView) {

    }
});
```

13. Setting the camera type and custom camera options.

code

```java
private void changeFlashlightCamera() {
    CameraCapturerFactory.getInstance().setCameraType(CameraCapturerFactory.CameraType.FLASHLIGHT_CAMERA);
    ...
}

private void changeZoomCamera() {
    CameraCapturerFactory.getInstance().setCustomCameraCapturerOptions(zoomCameraCapturerOptions);
    CameraCapturerFactory.getInstance().setCameraType(CameraCapturerFactory.CameraType.CUSTOM);
    ...
}

private void changePngOverlayCamera() {
    CameraCapturerFactory.getInstance().setCustomCameraCapturerOptions(pngOverlayCameraCapturerOptions);
    CameraCapturerFactory.getInstance().setCameraType(CameraCapturerFactory.CameraType.CUSTOM);
    ...
}

private void changeGpuImageCamera() {
    CameraCapturerFactory.getInstance().setCustomCameraCapturerOptions(gpuImageCameraCapturerOptions);
    CameraCapturerFactory.getInstance().setCameraType(CameraCapturerFactory.CameraType.CUSTOM);
    ...
}
```

14. Custom camera options for Zoom example.

code

```java
private CustomCameraCapturerOptions zoomCameraCapturerOptions = new CustomCameraCapturerOptions() {

    private String cameraName;
    private CameraVideoCapturer.CameraEventsHandler eventsHandler;
    private boolean captureToTexture;

    @Override
    public Class<?>[] getCameraConstructorArgsTypes() {
        return new Class<?>[]{String.class, CameraVideoCapturer.CameraEventsHandler.class, boolean.class};
    }

    @Override
    public Object[] getCameraConstructorArgs() {
        return new Object[]{cameraName, eventsHandler, captureToTexture};
    }

    @Override
    public void setCameraName(String cameraName) {
        this.cameraName = cameraName;
    }

    @Override
    public void setEventsHandler(CameraVideoCapturer.CameraEventsHandler eventsHandler) {
        this.eventsHandler = eventsHandler;
    }

    @Override
    public void setCaptureToTexture(boolean captureToTexture) {
        this.captureToTexture = captureToTexture;
    }

    @Override
    public String getCameraClassName() {
        return "org.webrtc.ZoomCameraCapturer";
    }

    @Override
    public Class<?>[] getEnumeratorConstructorArgsTypes() {
        return new Class[0];
    }

    @Override
    public Object[] getEnumeratorConstructorArgs() {
        return new Object[0];
    }

    @Override
    public String getEnumeratorClassName() {
        return "org.webrtc.ZoomCameraEnumerator";
    }
};
```
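The options object only supplies a class name, constructor parameter types and constructor arguments, which suggests that the SDK instantiates the capturer by reflection. The sketch below shows how that could work with standard java.lang.reflect calls. It is an assumption about CameraCapturerFactory, not its actual code; ReflectiveFactory and DemoCapturer are hypothetical, and an Object parameter stands in for CameraVideoCapturer.CameraEventsHandler.

```java
import java.lang.reflect.Constructor;

/**
 * Hypothetical sketch: turns the values returned by getCameraClassName(),
 * getCameraConstructorArgsTypes() and getCameraConstructorArgs() into an instance.
 */
final class ReflectiveFactory {
    private ReflectiveFactory() {}

    static Object create(String className, Class<?>[] argTypes, Object[] args)
            throws ReflectiveOperationException {
        Class<?> cls = Class.forName(className);
        // Look up the public constructor whose parameter list matches argTypes exactly.
        Constructor<?> ctor = cls.getConstructor(argTypes);
        // Primitive parameters such as boolean.class accept boxed values (Boolean).
        return ctor.newInstance(args);
    }
}

/** Hypothetical capturer with the same constructor shape as the options above. */
class DemoCapturer {
    final String cameraName;
    final Object eventsHandler;
    final boolean captureToTexture;

    public DemoCapturer(String cameraName, Object eventsHandler, boolean captureToTexture) {
        this.cameraName = cameraName;
        this.eventsHandler = eventsHandler;
        this.captureToTexture = captureToTexture;
    }
}
```

If the factory works this way, the class name string, the types array and the arguments array must stay consistent with each other, which is why each options object returns all three together.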

15. Custom camera options for PngOverlay example.

code

```java
private CustomCameraCapturerOptions pngOverlayCameraCapturerOptions = new CustomCameraCapturerOptions() {

    private String cameraName;
    private CameraVideoCapturer.CameraEventsHandler eventsHandler;
    private boolean captureToTexture;

    @Override
    public Class<?>[] getCameraConstructorArgsTypes() {
        return new Class<?>[]{String.class, CameraVideoCapturer.CameraEventsHandler.class, boolean.class};
    }

    @Override
    public Object[] getCameraConstructorArgs() {
        return new Object[]{cameraName, eventsHandler, captureToTexture};
    }

    @Override
    public void setCameraName(String cameraName) {
        this.cameraName = cameraName;
    }

    @Override
    public void setEventsHandler(CameraVideoCapturer.CameraEventsHandler eventsHandler) {
        this.eventsHandler = eventsHandler;
    }

    @Override
    public void setCaptureToTexture(boolean captureToTexture) {
        this.captureToTexture = captureToTexture;
    }

    @Override
    public String getCameraClassName() {
        return "org.webrtc.PngOverlayCameraCapturer";
    }

    @Override
    public Class<?>[] getEnumeratorConstructorArgsTypes() {
        return new Class[0];
    }

    @Override
    public Object[] getEnumeratorConstructorArgs() {
        return new Object[0];
    }

    @Override
    public String getEnumeratorClassName() {
        return "org.webrtc.PngOverlayCameraEnumerator";
    }
};
```

16. Custom camera options for GPUImage example.

code

```java
private CustomCameraCapturerOptions gpuImageCameraCapturerOptions = new CustomCameraCapturerOptions() {

    private String cameraName;
    private CameraVideoCapturer.CameraEventsHandler eventsHandler;
    private boolean captureToTexture;

    @Override
    public Class<?>[] getCameraConstructorArgsTypes() {
        return new Class<?>[]{String.class, CameraVideoCapturer.CameraEventsHandler.class, boolean.class};
    }

    @Override
    public Object[] getCameraConstructorArgs() {
        return new Object[]{cameraName, eventsHandler, captureToTexture};
    }

    @Override
    public void setCameraName(String cameraName) {
        this.cameraName = cameraName;
    }

    @Override
    public void setEventsHandler(CameraVideoCapturer.CameraEventsHandler eventsHandler) {
        this.eventsHandler = eventsHandler;
    }

    @Override
    public void setCaptureToTexture(boolean captureToTexture) {
        this.captureToTexture = captureToTexture;
    }

    @Override
    public String getCameraClassName() {
        return "org.webrtc.GPUImageCameraCapturer";
    }

    @Override
    public Class<?>[] getEnumeratorConstructorArgsTypes() {
        return new Class[0];
    }

    @Override
    public Object[] getEnumeratorConstructorArgs() {
        return new Object[0];
    }

    @Override
    public String getEnumeratorClassName() {
        return "org.webrtc.GPUImageCameraEnumerator";
    }
};
```

17. Custom camera options for Resolution example.

code

```java
private CustomCameraCapturerOptions resolutionCameraCapturerOptions = new CustomCameraCapturerOptions() {

    private String cameraName;
    private CameraVideoCapturer.CameraEventsHandler eventsHandler;
    private boolean captureToTexture;

    @Override
    public Class<?>[] getCameraConstructorArgsTypes() {
        return new Class<?>[]{String.class, CameraVideoCapturer.CameraEventsHandler.class, boolean.class};
    }

    @Override
    public Object[] getCameraConstructorArgs() {
        return new Object[]{cameraName, eventsHandler, captureToTexture};
    }

    @Override
    public void setCameraName(String cameraName) {
        this.cameraName = cameraName;
    }

    @Override
    public void setEventsHandler(CameraVideoCapturer.CameraEventsHandler eventsHandler) {
        this.eventsHandler = eventsHandler;
    }

    @Override
    public void setCaptureToTexture(boolean captureToTexture) {
        this.captureToTexture = captureToTexture;
    }

    @Override
    public String getCameraClassName() {
        return "org.webrtc.ResolutionCameraCapturer";
    }

    @Override
    public Class<?>[] getEnumeratorConstructorArgsTypes() {
        return new Class[0];
    }

    @Override
    public Object[] getEnumeratorConstructorArgs() {
        return new Object[0];
    }

    @Override
    public String getEnumeratorClassName() {
        return "org.webrtc.ResolutionCameraEnumerator";
    }
};
```

18. Turning on flashlight.

Flashphoner.turnOnFlashlight() code

```java
private void turnOnFlashlight() {
    if (Flashphoner.turnOnFlashlight()) {
        mSwitchFlashlightButton.setText(getResources().getString(R.string.turn_off_flashlight));
        flashlight = true;
    }
}
```

19. Turning off flashlight.

Flashphoner.turnOffFlashlight() code

```java
private void turnOffFlashlight() {
    Flashphoner.turnOffFlashlight();
    mSwitchFlashlightButton.setText(getResources().getString(R.string.turn_on_flashlight));
    flashlight = false;
}
```

20. Zoom in/out management with slider.

ZoomCameraCapturer.setZoom() code

```java
mZoomSeekBar.setOnSeekBarChangeListener(new SeekBar.OnSeekBarChangeListener() {
    @Override
    public void onProgressChanged(SeekBar seekBar, int progress, boolean fromUser) {
        CameraVideoCapturer cameraVideoCapturer = CameraCapturerFactory.getInstance().getCameraVideoCapturer();
        if (cameraVideoCapturer instanceof ZoomCameraCapturer) {
            ((ZoomCameraCapturer) cameraVideoCapturer).setZoom(progress);
        }
    }
    ...
});
```
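The slider passes its raw progress value to setZoom(), so the slider range is presumably configured elsewhere in the activity to match the camera's zoom range. If it is not, the progress can be mapped onto the valid zoom indices first. A minimal sketch, assuming maxZoom comes from Camera.Parameters.getMaxZoom() and seekMax from SeekBar.getMax(); ZoomMapper is a hypothetical helper.

```java
/**
 * Hypothetical helper: maps a slider progress in [0, seekMax]
 * to a camera zoom index in [0, maxZoom].
 */
final class ZoomMapper {
    private ZoomMapper() {}

    static int toZoomIndex(int progress, int seekMax, int maxZoom) {
        if (seekMax <= 0 || maxZoom <= 0) {
            // Zoom unsupported or slider not configured.
            return 0;
        }
        int clamped = Math.max(0, Math.min(progress, seekMax));
        // Integer scaling; the long cast avoids overflow for large ranges.
        return (int) ((long) clamped * maxZoom / seekMax);
    }
}
```

For example, with a 0..100 slider and a camera reporting getMaxZoom() == 30, progress 50 maps to zoom index 15.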

21. Overlaying a picture on the stream with permission request.

PngOverlayCameraCapturer.setPicture() code

```java
@Override
protected void onActivityResult(int requestCode, int resultCode, @Nullable Intent data) {
    super.onActivityResult(requestCode, resultCode, data);
    if (requestCode == REQUEST_IMAGE_CAPTURE && resultCode == RESULT_OK) {
        InputStream inputStream = null;
        try {
            inputStream = CameraManagerActivity.this.getBaseContext().getContentResolver().openInputStream(data.getData());
        } catch (FileNotFoundException e) {
            Log.e(TAG, "Can't select picture: " + e.getMessage());
        }
        picture = BitmapFactory.decodeStream(inputStream);
    }
    CameraVideoCapturer cameraVideoCapturer = CameraCapturerFactory.getInstance().getCameraVideoCapturer();
    if (cameraVideoCapturer instanceof PngOverlayCameraCapturer && picture != null) {
        ((PngOverlayCameraCapturer) cameraVideoCapturer).setPicture(picture);
    }
}
```

22. Choosing camera resolution.

code

```java
mCameraResolutionSpinner = (LabelledSpinner) findViewById(R.id.camera_resolution_spinner);
mCameraResolutionSpinner.setOnItemChosenListener(new LabelledSpinner.OnItemChosenListener() {
    @Override
    public void onItemChosen(View labelledSpinner, AdapterView<?> adapterView, View itemView, int position, long id) {
        String resolution = adapterView.getSelectedItem().toString();
        if (resolution.isEmpty()) {
            return;
        }
        setResolutions(resolution);
    }

    @Override
    public void onNothingChosen(View labelledSpinner, AdapterView<?> adapterView) {

    }
});
...
private void setResolutions(String resolutionStr) {
    String[] resolution = resolutionStr.split("x");
    mWidth.setText(resolution[0]);
    mHeight.setText(resolution[1]);
}
```
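setResolutions() splits the chosen spinner item on "x" and assumes that both parts are present. A more defensive parser, sketched below with a hypothetical ResolutionParser class, rejects malformed items instead of throwing an exception:

```java
/**
 * Hypothetical parser for spinner items of the form "WIDTHxHEIGHT" (e.g. "1280x720").
 * Returns {width, height}, or null when the text is malformed.
 */
final class ResolutionParser {
    private ResolutionParser() {}

    static int[] parse(String text) {
        if (text == null) {
            return null;
        }
        String[] parts = text.trim().split("x");
        if (parts.length != 2) {
            return null;
        }
        try {
            int width = Integer.parseInt(parts[0].trim());
            int height = Integer.parseInt(parts[1].trim());
            return (width > 0 && height > 0) ? new int[]{width, height} : null;
        } catch (NumberFormatException e) {
            return null;
        }
    }
}
```

Note that String.split() drops trailing empty strings, so an item such as "640x" yields a single part and is rejected by the length check.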

23. Camera session creation in ZoomCameraCapturer class

CameraSession.create() code

```java
@Override
protected void createCameraSession(CameraSession.CreateSessionCallback createSessionCallback, CameraSession.Events events, Context applicationContext, SurfaceTextureHelper surfaceTextureHelper, String cameraName, int width, int height, int framerate) {

    CameraSession.CreateSessionCallback myCallback = new CameraSession.CreateSessionCallback() {
        @Override
        public void onDone(CameraSession cameraSession) {
            ZoomCameraCapturer.this.cameraSession = (ZoomCameraSession) cameraSession;
            createSessionCallback.onDone(cameraSession);
        }

        @Override
        public void onFailure(CameraSession.FailureType failureType, String s) {
            createSessionCallback.onFailure(failureType, s);
        }
    };

    ZoomCameraSession.create(myCallback, events, captureToTexture, applicationContext, surfaceTextureHelper, Camera1Enumerator.getCameraIndex(cameraName), width, height, framerate);
}
```

24. Zoom in/out value setting in ZoomCameraCapturer class

CameraSession.setZoom() code

```java
public boolean setZoom(int value) {
    return cameraSession.setZoom(value);
}
```

25. Byte buffer allocation for camera image data in ZoomCameraSession class

code

```java
if (!captureToTexture) {
    int frameSize = captureFormat.frameSize();
    // The implementation is taken from the WebRTC library. Several buffers are queued so that
    // the camera can fill a new frame while earlier ones are still being processed; with
    // setPreviewCallbackWithBuffer(), a frame that arrives when no buffer is queued is dropped.
    for (int i = 0; i < 3; ++i) {
        ByteBuffer buffer = ByteBuffer.allocateDirect(frameSize);
        camera.addCallbackBuffer(buffer.array());
    }
}
```
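The three buffers allocated above form a small pool. The camera fills a queued buffer for each preview frame, and the NV21Buffer release callback in the frame handler returns it with camera.addCallbackBuffer(data). The plain Java simulation below uses a hypothetical CallbackBufferPool class to show the size of an NV21 frame and what happens when all buffers are still in use: the frame is dropped.

```java
import java.util.ArrayDeque;

/** Simulation of the preview callback buffer queue used with setPreviewCallbackWithBuffer(). */
final class CallbackBufferPool {
    private final ArrayDeque<byte[]> free = new ArrayDeque<>();
    private int dropped;

    /** NV21 uses 12 bits per pixel: a full Y plane plus a quarter-size interleaved V/U plane. */
    static int nv21FrameSize(int width, int height) {
        return width * height * 3 / 2;
    }

    CallbackBufferPool(int count, int frameSize) {
        for (int i = 0; i < count; i++) {
            free.add(new byte[frameSize]);
        }
    }

    /** Called when a frame arrives: returns a buffer to fill, or null if the frame is dropped. */
    byte[] onFrame() {
        byte[] buffer = free.poll();
        if (buffer == null) {
            dropped++;
        }
        return buffer;
    }

    /** Counterpart of camera.addCallbackBuffer(): makes the buffer available again. */
    void release(byte[] buffer) {
        free.add(buffer);
    }

    int droppedFrames() {
        return dropped;
    }
}
```

A 640x480 NV21 frame takes 460800 bytes, so three such buffers cost about 1.4 MB; more buffers reduce dropped frames when processing (for example, a filter or overlay) is slow, at the cost of memory.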

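26. Zoom in/out implementation in ZoomCameraSession class

code

```java
public boolean setZoom(int value) {
    // Zoom can only be changed on an active camera that supports it.
    if (!isCameraActive() || !camera.getParameters().isZoomSupported()) {
        return false;
    }
    Camera.Parameters parameters = camera.getParameters();
    // Valid zoom values are 0..getMaxZoom(); clamp so that setParameters() does not fail.
    parameters.setZoom(Math.max(0, Math.min(value, parameters.getMaxZoom())));
    camera.setParameters(parameters);
    return true;
}
```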

27. Enabling the filter in GPUImageCameraSession class

code

```java
public void setUsedFilter(boolean usedFilter) {
    isUsedFilter = usedFilter;
}
```

28. Applying the filter to image data from the camera buffer

code

```java
private void listenForBytebufferFrames() {
    this.camera.setPreviewCallbackWithBuffer(new Camera.PreviewCallback() {
        public void onPreviewFrame(byte[] data, Camera callbackCamera) {
            GPUImageCameraSession.this.checkIsOnCameraThread();
            if (callbackCamera != GPUImageCameraSession.this.camera) {
                Logging.e(TAG, CALLBACK_FROM_A_DIFFERENT_CAMERA_THIS_SHOULD_NEVER_HAPPEN);
            } else if (GPUImageCameraSession.this.state != GPUImageCameraSession.SessionState.RUNNING) {
                Logging.d(TAG, BYTEBUFFER_FRAME_CAPTURED_BUT_CAMERA_IS_NO_LONGER_RUNNING);
            } else {
                ...
                applyFilter(data, GPUImageCameraSession.this.captureFormat.width, GPUImageCameraSession.this.captureFormat.height);
                VideoFrame.Buffer frameBuffer = new NV21Buffer(data, GPUImageCameraSession.this.captureFormat.width, GPUImageCameraSession.this.captureFormat.height, () -> {
                    GPUImageCameraSession.this.cameraThreadHandler.post(() -> {
                        if (GPUImageCameraSession.this.state == GPUImageCameraSession.SessionState.RUNNING) {
                            GPUImageCameraSession.this.camera.addCallbackBuffer(data);
                        }
                    });
                });
                VideoFrame frame = new VideoFrame(frameBuffer, GPUImageCameraSession.this.getFrameOrientation(), captureTimeNs);
                GPUImageCameraSession.this.events.onFrameCaptured(GPUImageCameraSession.this, frame);
                frame.release();
            }
        }
    });
}
```
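applyFilter() modifies the NV21 byte array in place before it is wrapped in an NV21Buffer. For comparison, a plain grayscale effect can be applied to NV21 data on the CPU without GPUImage by setting every chroma byte to the neutral value 128. This is a swapped-in technique, not what the example does, and it does not reproduce the tonal result of GPUImageMonochromeFilter. Nv21Gray is a hypothetical class name.

```java
/**
 * CPU grayscale sketch for an NV21 frame: the first width*height bytes are luma (Y),
 * the rest is interleaved V/U chroma. Neutral chroma (128) leaves only brightness.
 */
final class Nv21Gray {
    private Nv21Gray() {}

    static void toGray(byte[] nv21, int width, int height) {
        int lumaSize = width * height;
        if (nv21.length < lumaSize) {
            throw new IllegalArgumentException("buffer is smaller than the Y plane");
        }
        // Luma bytes are kept; every chroma byte is set to the neutral value.
        for (int i = lumaSize; i < nv21.length; i++) {
            nv21[i] = (byte) 128;
        }
    }
}
```

Because it touches only the chroma plane and allocates nothing, this kind of in-place edit is much cheaper per frame than rendering through a GPU pixel buffer and converting back.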

29. Filter implementation

code

```java
private void applyFilter(byte[] data, int width, int height) {
    if (!isUsedFilter) {
        return;
    }

    // A new filter, renderer and off-screen pixel buffer are created for every frame.
    GPUImageMonochromeFilter filter = new GPUImageMonochromeFilter();
    filter.setColor(0, 0, 0);
    GPUImageRenderer renderer = new GPUImageRenderer(filter);
    renderer.setRotation(Rotation.NORMAL, false, false);
    renderer.setScaleType(GPUImage.ScaleType.CENTER_INSIDE);
    PixelBuffer buffer = new PixelBuffer(width, height);
    buffer.setRenderer(renderer);

    // Render the NV21 frame through the filter and read the result back as a Bitmap.
    renderer.onPreviewFrame(data, width, height);
    Bitmap newBitmapRgb = buffer.getBitmap();

    // Convert the filtered image back to NV21 and overwrite the camera buffer in place.
    byte[] dataYuv = Utils.getNV21(width, height, newBitmapRgb);
    System.arraycopy(dataYuv, 0, data, 0, dataYuv.length);

    filter.destroy();
    buffer.destroy();
}
```
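After rendering, the filtered Bitmap is converted back to NV21 with Utils.getNV21(), whose implementation is not shown here. The sketch below shows one common way to perform such an ARGB to NV21 conversion: integer BT.601 coefficients, with one V/U pair per 2x2 block. The ArgbToNv21 class name is hypothetical, and even frame dimensions are assumed.

```java
/** Sketch of an ARGB_8888 to NV21 conversion (BT.601, studio range), assuming even width and height. */
final class ArgbToNv21 {
    private ArgbToNv21() {}

    static byte[] convert(int width, int height, int[] argb) {
        int frameSize = width * height;
        byte[] nv21 = new byte[frameSize * 3 / 2];
        int uvIndex = frameSize;
        for (int j = 0; j < height; j++) {
            for (int i = 0; i < width; i++) {
                int pixel = argb[j * width + i];
                int r = (pixel >> 16) & 0xFF;
                int g = (pixel >> 8) & 0xFF;
                int b = pixel & 0xFF;

                int y = ((66 * r + 129 * g + 25 * b + 128) >> 8) + 16;
                nv21[j * width + i] = (byte) clamp(y);

                // One V/U pair per 2x2 block, sampled from the block's top left pixel.
                if (j % 2 == 0 && i % 2 == 0) {
                    int u = ((-38 * r - 74 * g + 112 * b + 128) >> 8) + 128;
                    int v = ((112 * r - 94 * g - 18 * b + 128) >> 8) + 128;
                    nv21[uvIndex++] = (byte) clamp(v); // NV21 stores V before U
                    nv21[uvIndex++] = (byte) clamp(u);
                }
            }
        }
        return nv21;
    }

    private static int clamp(int value) {
        return Math.max(0, Math.min(255, value));
    }
}
```

With these coefficients, white maps to Y = 235 and black to Y = 16, both with neutral chroma (128).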

30. Setting the picture bitmap to overlay in PngOverlayCameraCapturer class

code

```java
public void setPicture(Bitmap picture) {
    if (cameraSession != null) {
        cameraSession.setPicture(picture);
    }
}
```
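insertPicture() obtains the picture to draw from rescalingPicture(), which presumably scales the selected bitmap to the PNG width and PNG height set in the UI; its code is not shown. On Android, Bitmap.createScaledBitmap() is the usual way to do this. For illustration only, nearest-neighbour scaling of a raw ARGB pixel array looks like this (NearestNeighbourScaler is a hypothetical class):

```java
/** Hypothetical nearest-neighbour scaler for row-major ARGB pixel arrays. */
final class NearestNeighbourScaler {
    private NearestNeighbourScaler() {}

    static int[] scale(int[] src, int srcW, int srcH, int dstW, int dstH) {
        if (srcW <= 0 || srcH <= 0 || dstW <= 0 || dstH <= 0) {
            throw new IllegalArgumentException("dimensions must be positive");
        }
        int[] dst = new int[dstW * dstH];
        for (int y = 0; y < dstH; y++) {
            // Source row that this destination row samples from.
            int sy = y * srcH / dstH;
            for (int x = 0; x < dstW; x++) {
                // Source column that this destination column samples from.
                int sx = x * srcW / dstW;
                dst[y * dstW + x] = src[sy * srcW + sx];
            }
        }
        return dst;
    }
}
```

Nearest-neighbour sampling is the cheapest option but produces blocky edges when enlarging; Bitmap.createScaledBitmap() with filtering enabled gives smoother results.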

31. Overlaying picture data on camera image data

code

```java
private void listenForBytebufferFrames() {
    this.camera.setPreviewCallbackWithBuffer(new Camera.PreviewCallback() {
        public void onPreviewFrame(byte[] data, Camera callbackCamera) {
            PngOverlayCameraSession.this.checkIsOnCameraThread();
            if (callbackCamera != PngOverlayCameraSession.this.camera) {
                Logging.e(TAG, CALLBACK_FROM_A_DIFFERENT_CAMERA_THIS_SHOULD_NEVER_HAPPEN);
            } else if (PngOverlayCameraSession.this.state != PngOverlayCameraSession.SessionState.RUNNING) {
                Logging.d(TAG, BYTEBUFFER_FRAME_CAPTURED_BUT_CAMERA_IS_NO_LONGER_RUNNING);
            } else {
                ...
                insertPicture(data, PngOverlayCameraSession.this.captureFormat.width, PngOverlayCameraSession.this.captureFormat.height);
                VideoFrame.Buffer frameBuffer = new NV21Buffer(data, PngOverlayCameraSession.this.captureFormat.width, PngOverlayCameraSession.this.captureFormat.height, () -> {
                    PngOverlayCameraSession.this.cameraThreadHandler.post(() -> {
                        if (PngOverlayCameraSession.this.state == PngOverlayCameraSession.SessionState.RUNNING) {
                            PngOverlayCameraSession.this.camera.addCallbackBuffer(data);
                        }
                    });
                });
                VideoFrame frame = new VideoFrame(frameBuffer, PngOverlayCameraSession.this.getFrameOrientation(), captureTimeNs);
                PngOverlayCameraSession.this.events.onFrameCaptured(PngOverlayCameraSession.this, frame);
                frame.release();
            }
        }
    });
}
```

32. Picture overlaying implementation

code

```java
private void insertPicture(byte[] data, int width, int height) {
    if (picture == null || !isUsedPngOverlay) {
        return;
    }

    // Scale the selected picture and read its pixels as ARGB ints.
    Bitmap scaledPicture = rescalingPicture();
    int[] pngArray = new int[scaledPicture.getHeight() * scaledPicture.getWidth()];
    scaledPicture.getPixels(pngArray, 0, scaledPicture.getWidth(), 0, 0, scaledPicture.getWidth(), scaledPicture.getHeight());

    // Convert the NV21 camera frame to ARGB ints so that picture pixels can be copied in.
    int[] rgbData = new int[width * height];
    GPUImageNativeLibrary.YUVtoARBG(data, width, height, rgbData);

    int pictureW = scaledPicture.getWidth();
    int pictureH = scaledPicture.getHeight();

    for (int c = 0; c < pngArray.length; c++) {
        int pictureColumn = c / pictureW;
        int pictureLine = c - pictureColumn * pictureW;
        int index = (pictureLine * width) + pictureColumn + startX * width + startY;
        // rgbData holds width * height pixels, fewer than the NV21 byte array.
        if (index >= rgbData.length) {
            break;
        }
        rgbData[index] = pngArray[c];
    }

    // Convert back to NV21 and overwrite the camera buffer in place.
    byte[] yuvData = Utils.getNV21(width, height, rgbData);
    System.arraycopy(yuvData, 0, data, 0, yuvData.length);
}
```

33. Getting the list of supported resolutions

ResolutionCameraCapturer.getSupportedResolutions() code

```java
public List<Camera.Size> getSupportedResolutions() {
    Camera camera = Camera.open(Camera1Enumerator.getCameraIndex(cameraName));
    List<Camera.Size> ret = Collections.emptyList();
    if (camera != null) {
        Camera.Parameters parameters = camera.getParameters();
        ret = parameters.getSupportedVideoSizes();
        // getSupportedVideoSizes() returns null when the camera has no separate
        // video output; the preview sizes apply in that case.
        if (ret == null) {
            ret = parameters.getSupportedPreviewSizes();
        }
        camera.release();
    }
    return ret;
}
```
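In insertPicture(), pictureColumn is actually the picture row (c / pictureW) and pictureLine is the picture column, and startX is multiplied by the frame width. As a result, the picture is written transposed relative to plain row-major order. This may be intended to compensate for the camera sensor orientation, but the source does not say so. For comparison, here is a sketch of a plain row-major paste that treats X/Y as the top left corner and clips at the frame edges. The Overlay class is hypothetical, and, like the example, it copies pixels without alpha blending.

```java
/** Hypothetical row-major paste of an ARGB picture into an ARGB frame, with clipping. */
final class Overlay {
    private Overlay() {}

    static void paste(int[] frame, int frameW, int frameH,
                      int[] picture, int picW, int picH,
                      int startX, int startY) {
        for (int py = 0; py < picH; py++) {
            int fy = startY + py;
            if (fy < 0 || fy >= frameH) {
                continue; // picture row falls outside the frame
            }
            for (int px = 0; px < picW; px++) {
                int fx = startX + px;
                if (fx < 0 || fx >= frameW) {
                    continue; // picture column falls outside the frame
                }
                frame[fy * frameW + fx] = picture[py * picW + px];
            }
        }
    }
}
```

Clipping per row and column keeps a picture placed near the right or bottom edge from wrapping around onto the next row, which a single index bound check cannot prevent.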