WCS allows mixing streams of active broadcasts. The mixer output stream can be recorded, played or republished using any of the technologies supported by WCS.

Mixing is controlled using server settings and the REST API.

Stream transcoding is applied while mixing streams. The recommended server configuration is 2 CPU cores per mixer.

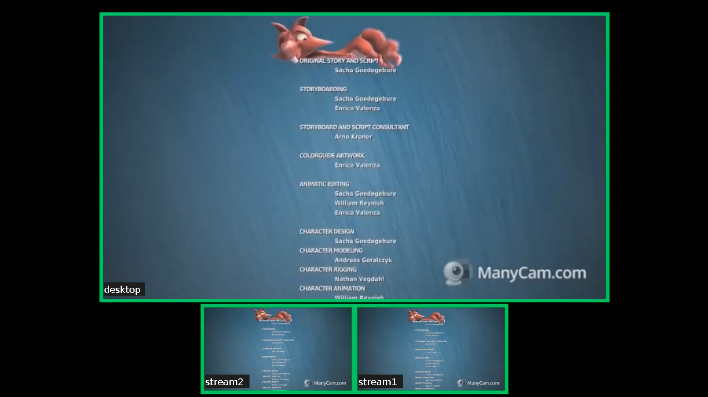

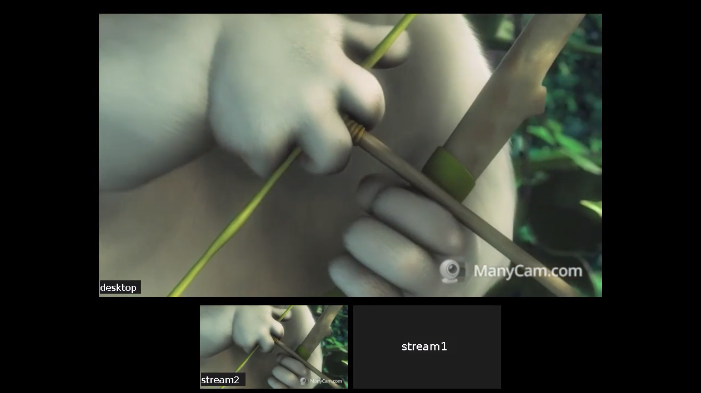

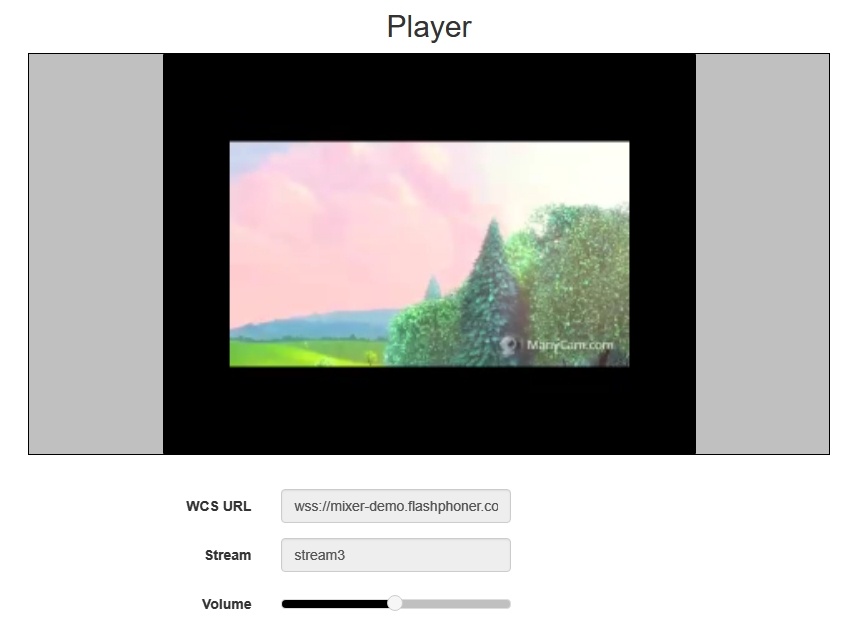

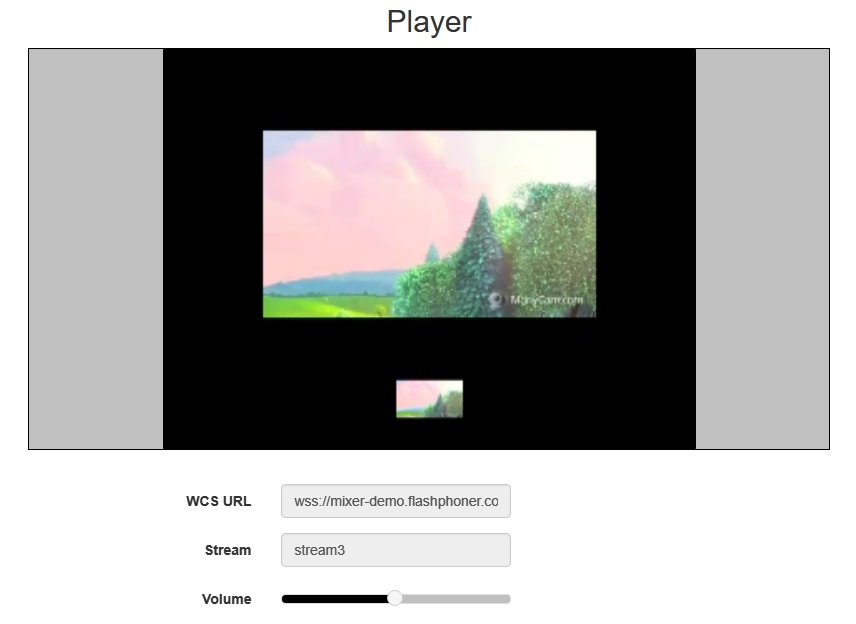

The mixer allows custom placement of video streams in the output frame. A stream with a certain name (desktop by default) is treated as screen sharing and is given the main place in the frame.

If the name of a published RTMP stream contains the '#' symbol, the server treats the part after that symbol as the name of a mixer, which is created when the stream is published. For instance, for the user1#room1 stream, the room1 mixer is created and the stream is then added to this mixer. The stream name can also include the screen sharing keyword, for example, user1#room1#desktop.
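The naming rule above can be illustrated with a small parser. This is a minimal sketch, not the server's code. The class MixerStreamName and its fields are hypothetical. It assumes that the screen sharing keyword is the third '#'-separated part and equals the default value desktop.

```java
/**
 * Hypothetical parser illustrating how a published stream name maps to a mixer.
 * Not the WCS implementation: it assumes the keyword is the third '#'-separated part.
 */
public class MixerStreamName {
    // Default value of mixer_video_layout_desktop_key_word
    static final String DESKTOP_KEY_WORD = "desktop";

    final String user;
    final String mixer;           // null when the name contains no '#'
    final boolean screenSharing;  // true when the keyword follows the mixer name

    private MixerStreamName(String user, String mixer, boolean screenSharing) {
        this.user = user;
        this.mixer = mixer;
        this.screenSharing = screenSharing;
    }

    static MixerStreamName parse(String streamName) {
        // A negative limit keeps trailing empty parts instead of dropping them
        String[] parts = streamName.split("#", -1);
        String mixer = parts.length > 1 ? parts[1] : null;
        boolean desktop = parts.length > 2 && DESKTOP_KEY_WORD.equals(parts[2]);
        return new MixerStreamName(parts[0], mixer, desktop);
    }

    public static void main(String[] args) {
        MixerStreamName name = parse("user1#room1#desktop");
        // Prints: user1 -> mixer://room1, screen sharing: true
        System.out.println(name.user + " -> mixer://" + name.mixer + ", screen sharing: " + name.screenSharing);
    }
}
```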

A REST-query must be an HTTP/HTTPS POST query in the following form:

Here:

| REST method | Response statuses | Description |
|---|---|---|
| /mixer/startup | 200 - OK, 400 - Bad request, 409 - Conflict, 500 - Internal error | Create a mixer whose output stream is published under the given name |
| /mixer/add | 200 - OK, 404 - Mixer not found, 404 - Stream not found, 500 - Internal error | Add a stream to the mixer |
| /mixer/remove | 200 - OK, 404 - Mixer not found, 404 - Stream not found, 500 - Internal error | Remove a stream from the mixer |
| /mixer/find_all | 200 - OK, 404 - Not found, 500 - Internal error | Find all mixers |
| /mixer/terminate | 200 - OK, 404 - Not found, 500 - Internal error | Terminate the mixer |
| /stream/startRecording | 200 - OK, 404 - Not found, 500 - Internal error | Start recording the stream in the given media session |
| /stream/stopRecording | 200 - OK, 404 - Not found, 500 - Internal error | Stop recording the stream in the given media session |
| /mixer/setAudioVideo | 200 - OK, 400 - Bad request, 404 - Not found, 500 - Internal error | Mute/unmute video or change the audio level of a mixer incoming stream |

| Parameter name | Description | Example |
|---|---|---|
| uri | Unique identifier of the mixer | mixer://mixer1 |
| localStreamName | Name of the mixer output stream | stream3 |
| hasVideo | Mix video | true |
| hasAudio | Mix audio | true |
| remoteStreamName | Name of the stream added to the mixer | stream1 |
| mediaSessionId | Media session identifier | ce92b134-2468-4460-8d06-1ea3c5aabace |
| status | Stream status | PROCESSED_LOCAL |
| background | Mixer background | background.png |
| watermark | Mixer watermark | watermark.png |
| mixerLayoutClass | Mixer layout | com.flashphoner.mixerlayout.TestLayout |
| streams | List of streams or regular expression to search for | ^stream.* or ["stream1", "stream2"] |
| audioLevel | Incoming stream audio level | 0 |
| videoMuted | Mute video | true |

Since build 5.2.872, most mixer parameters that correspond to the mixer settings in flashphoner.properties can be passed when creating a mixer with the /mixer/startup REST query. In this case, the parameters are applied to the created mixer instance only. For example, the following query

```json
{
  "uri": "mixer://mixer1",
  "localStreamName": "stream3",
  "mixerVideoWidth": 640,
  "mixerVideoHeight": 360,
  "mixerVideoFps": 24,
  "mixerVideoBitrateKbps": 500
}
```

creates a mixer with 640x360 output stream resolution, 24 fps and 500 kbps bitrate, regardless of the settings in the flashphoner.properties file.

The full list of mixer parameters can be retrieved with the /mixer/find_all REST query, for example:

```json
[
  {
    "localMediaSessionId": "7c9e5353-8680-4ad2-8a47-1a366091785c",
    "localStreamName": "m1",
    "uri": "mixer://m1",
    "status": "PROCESSED_LOCAL",
    "hasAudio": true,
    "hasVideo": true,
    "record": false,
    "mediaSessions": [],
    "mixerLayoutClass": "com.flashphoner.media.mixer.video.presentation.GridLayout",
    "mixerActivityTimerCoolOffPeriod": 1,
    "mixerActivityTimerTimeout": -1,
    "mixerAppName": "defaultApp",
    "mixerAudioOpusFloatCoding": false,
    "mixerAudioSilenceThreshold": -50,
    "mixerAudioThreads": 4,
    "mixerAutoScaleDesktop": true,
    "mixerDebugMode": false,
    "mixerDesktopAlign": "TOP",
    "mixerDisplayStreamName": false,
    "mixerFontSize": 20,
    "mixerFontSizeAudioOnly": 40,
    "mixerIdleTimeout": 20000,
    "mixerInBufferingMs": 200,
    "mixerIncomingTimeRateLowerThreshold": 0.95,
    "mixerIncomingTimeRateUpperThreshold": 1.05,
    "mixerMcuAudio": false,
    "mixerMcuVideo": false,
    "mixerMcuMultithreadedMix": false,
    "mixerMinimalFontSize": 1,
    "mixerMcuMultithreadedDelivery": false,
    "mixerOutBufferEnabled": false,
    "mixerOutBufferInitialSize": 2000,
    "mixerOutBufferStartSize": 150,
    "mixerOutBufferPollingTime": 100,
    "mixerOutBufferMaxBufferingsAllowed": -1,
    "mixerShowSeparateAudioFrame": true,
    "mixerTextAutoscale": true,
    "mixerTextColour": "0xFFFFFF",
    "mixerTextBulkWriteWithBuffer": true,
    "mixerTextBulkWrite": true,
    "mixerTextBackgroundOpacity": 100,
    "mixerTextBackgroundColour": "0x2B2A2B",
    "mixerTextPaddingLeft": 5,
    "mixerVoiceActivitySwitchDelay": 0,
    "mixerVoiceActivityFrameThickness": 6,
    "mixerVoiceActivityFramePositionInner": false,
    "mixerVoiceActivityColour": "0x00CC66",
    "mixerVoiceActivity": true,
    "mixerVideoWidth": 1280,
    "mixerVideoThreads": 4,
    "mixerVideoStableFpsThreshold": 15,
    "mixerVideoQuality": 24,
    "mixerVideoProfileLevel": "42c02a",
    "mixerVideoLayoutDesktopKeyWord": "desktop",
    "mixerVideoHeight": 720,
    "mixerVideoGridLayoutPadding": 30,
    "mixerVideoGridLayoutMiddlePadding": 10,
    "mixerVideoFps": 30,
    "mixerVideoDesktopLayoutPadding": 30,
    "mixerVideoDesktopLayoutInlinePadding": 10,
    "mixerVideoBufferLength": 1000,
    "mixerVideoBitrateKbps": 2000,
    "mixerUseSdpState": true,
    "mixerType": "NATIVE",
    "mixerThreadTimeoutMs": 33,
    "mixerTextPaddingTop": 5,
    "mixerTextPaddingRight": 4,
    "mixerTextFont": "Serif",
    "mixerTextPaddingBottom": 5,
    "mixerTextDisplayRoom": true,
    "mixerTextCutTop": 3,
    "mixerRealtime": true,
    "mixerPruneStreams": false,
    "audioMixerOutputCodec": "alaw",
    "audioMixerOutputSampleRate": 48000,
    "audioMixerMaxDelay": 300,
    "mixerAudioOnlyHeight": 360,
    "mixerAudioOnlyWidth": 640
  }
]
```

To send REST queries to the WCS server, use a REST client.

The mixer output stream name must be set with the localStreamName parameter when creating a mixer via the REST API. Otherwise, the server returns 400 Bad request with the message "No localStreamName given".
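A REST client for these queries can be sketched with the standard java.net.http API (Java 11+). The class MixerRestClient is hypothetical and makes several assumptions:

- The server is reachable at http://localhost:8081/rest-api, as in the curl examples further below.
- JSON bodies are built by hand, without escaping.
- An empty localStreamName is rejected before sending, mirroring the 400 response described above.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

/**
 * Minimal sketch of a mixer REST API client (hypothetical, not part of WCS).
 * Assumes names contain no characters that need JSON escaping.
 */
public class MixerRestClient {
    private final HttpClient http = HttpClient.newHttpClient();
    private final String baseUrl;

    public MixerRestClient(String baseUrl) {
        this.baseUrl = baseUrl;
    }

    /** Body for /mixer/startup; fails early like the server's 400 "No localStreamName given". */
    static String startupBody(String uri, String localStreamName) {
        if (localStreamName == null || localStreamName.isEmpty()) {
            throw new IllegalArgumentException("No localStreamName given");
        }
        return "{\"uri\": \"" + uri + "\", \"localStreamName\": \"" + localStreamName + "\"}";
    }

    /** Body for /mixer/add and /mixer/remove. */
    static String streamBody(String uri, String remoteStreamName) {
        return "{\"uri\": \"" + uri + "\", \"remoteStreamName\": \"" + remoteStreamName + "\"}";
    }

    /** Sends a JSON POST request to the given REST method and returns the HTTP status code. */
    int post(String method, String json) throws IOException, InterruptedException {
        HttpRequest request = HttpRequest.newBuilder(URI.create(baseUrl + method))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(json))
                .build();
        HttpResponse<String> response = http.send(request, HttpResponse.BodyHandlers.ofString());
        return response.statusCode();
    }

    public static void main(String[] args) throws Exception {
        MixerRestClient client = new MixerRestClient("http://localhost:8081/rest-api");
        System.out.println(client.post("/mixer/startup", startupBody("mixer://mixer1", "stream3")));
        System.out.println(client.post("/mixer/add", streamBody("mixer://mixer1", "stream1")));
    }
}
```

Running main requires a live WCS instance. The body builders can be checked offline.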

Mixing can be configured using the following parameters in the flashphoner.properties settings file:

| Parameter | Default value | Description |
|---|---|---|
| mixer_video_desktop_layout_inline_padding | 10 | Distance (padding) between video stream windows in the lower line (below the screen sharing window) |
| mixer_video_desktop_layout_padding | 30 | Distance (padding) between the screen sharing window and the lower line (the other streams) |
| mixer_video_enabled | true | Enables (default) or disables video mixing |
| mixer_video_grid_layout_middle_padding | 10 | Distance between video stream windows in one line (without a screen sharing window) |
| mixer_video_grid_layout_padding | 30 | Distance between lines of windows (without a screen sharing window) |
| mixer_video_height | 720 | Image height of the mixer output stream, should be even. An odd value is automatically decremented by one: e.g., if set to 481, the mixer height will be 480 |
| mixer_video_layout_desktop_key_word | desktop | Keyword for the screen sharing stream |
| mixer_video_width | 1280 | Image width of the mixer output stream, should be even. An odd value is automatically decremented by one: e.g., if set to 641, the mixer width will be 640 |
| record_mixer_streams | false | Turns on or off (default) recording of all mixer output streams |
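The odd-to-even rule for mixer_video_width and mixer_video_height amounts to clearing the lowest bit of the configured value. Here is a minimal sketch of that arithmetic. The helper name is hypothetical, and the sketch assumes positive values.

```java
/**
 * Sketch of the documented rule for mixer_video_width / mixer_video_height:
 * an odd value is decremented by one (641 -> 640, 481 -> 480), an even value is kept.
 */
public class MixerDimensions {
    static int effectiveDimension(int configured) {
        // Clearing the lowest bit turns an odd positive value into the even value just below it
        return configured & ~1;
    }

    public static void main(String[] args) {
        // Prints: 640x480
        System.out.println(effectiveDimension(641) + "x" + effectiveDimension(481));
    }
}
```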

Automatic creation of mixers for streams with the '#' symbol in their names requires the handler 'com.flashphoner.server.client.handler.wcs4.FlashRoomRecordingStreamingHandler' to be registered for the application that handles incoming streams. The handler can be registered using the command line interface. For instance, for the flashStreamingApp application used to publish incoming RTMP streams, this can be done with the following command:

```
update app -m com.flashphoner.server.client.handler.wcs4.FlashRoomRecordingStreamingHandler -c com.flashphoner.server.client.handler.wcs4.FlashStreamingCallbackHandler flashStreamingApp
```

You can read more about managing applications using the command line of the WCS server here.

By default, both video and audio are mixed. If audio-only mixing is needed, disable video when creating the mixer:

```json
{
  "uri": "mixer://mixer1",
  "localStreamName": "stream3",
  "hasVideo": "false"
}
```

To disable video mixing for all mixers, set the following parameter in the flashphoner.properties file:

```properties
mixer_video_enabled=false
```

In this case, video mixing can still be enabled for a particular mixer when it is created.

Since build 5.2.689, audio mixing can be enabled or disabled when creating a mixer:

```json
{
  "uri": "mixer://mixer1",
  "localStreamName": "stream3",
  "hasAudio": "false"
}
```

or for all mixers in the server settings:

```properties
mixer_audio_enabled=false
```

When a stream with only an audio track or only a video track is published to an audio and video mixer, RTP activity control must be disabled with the following parameters:

```properties
rtp_activity_audio=false
rtp_activity_video=false
```

In some cases, the mixer output stream needs buffering. This feature is enabled with the following parameter in the flashphoner.properties file:

```properties
mixer_out_buffer_enabled=true
```

The buffer size in milliseconds is set with the parameter

```properties
mixer_out_buffer_start_size=400
```

In this case, the buffer size is 400 ms.

The period, in milliseconds, at which stream data is fetched from the buffer and sent is set with the parameter

```properties
mixer_out_buffer_polling_time=20
```

In this case, the period is 20 ms.

When the OpenH264 codec is used for transcoding, the mixer output stream bitrate can be changed with the following parameter in the flashphoner.properties file:

```properties
mixer_video_bitrate_kbps=2000
```

By default, the mixer output stream bitrate is 2 Mbps. If the channel bandwidth between the server and a viewer is insufficient, the bitrate can be reduced, for example:

```properties
encoder_priority=OPENH264
mixer_video_bitrate_kbps=1500
```

If picture quality at the default bitrate is low, or distortion occurs, it is recommended to raise the mixer output stream bitrate to 3-5 Mbps:

```properties
encoder_priority=OPENH264
mixer_video_bitrate_kbps=5000
```

By default, the mixer output stream sound is encoded to Opus with a 48 kHz sample rate. These settings can be changed with parameters in the flashphoner.properties file. For example, to use the mixer output stream in a SIP call, the following values can be set:

```properties
audio_mixer_output_codec=pcma
audio_mixer_output_sample_rate=8000
```

In this case, sound is encoded to PCMA (alaw) with an 8 kHz sample rate.

If mixer incoming streams need additional handling, for example extra buffering or audio and video track synchronization, the custom lossless videoprocessor can be used. This feature is enabled with the following parameter in the flashphoner.properties file:

```properties
mixer_lossless_video_processor_enabled=true
```

The maximum mixer buffer size in milliseconds is set with this parameter:

```properties
mixer_lossless_video_processor_max_mixer_buffer_size_ms=200
```

By default, the maximum mixer buffer size is 200 ms. When this buffer is full, the custom lossless videoprocessor uses its own buffer and waits for the mixer buffer to be freed. The mixer buffer checking period in milliseconds is set with this parameter:

```properties
mixer_lossless_video_processor_wait_time_ms=20
```

By default, the mixer buffer checking period is 20 ms.

Note that using the custom lossless videoprocessor may degrade real-time performance.

When the custom lossless videoprocessor is used, the mixer must be stopped with the /mixer/terminate REST query to free all consumed resources. The mixer can also be stopped by stopping all incoming streams. In this case, the mixer stops when the following timeout in milliseconds expires:

```properties
mixer_idle_timeout=60000
```

By default, the mixer stops after 60 seconds if there are no active incoming streams.

By default, three mixer output stream layouts are implemented:

The grid layout is enabled with the following parameter in the flashphoner.properties file:

```properties
mixer_layout_class=com.flashphoner.media.mixer.video.presentation.GridLayout
```

The centered grid layout without padding is enabled with the following parameter:

```properties
mixer_layout_class=com.flashphoner.media.mixer.video.presentation.CenterNoPaddingGridLayout
```

This layout works only for input streams with equal resolution and the same aspect ratio.

Since build 5.2.842, a participant's video can be cropped around its center. This can be useful in a video conference, when a participant's face is in the central part of the picture.

This layout is enabled with the following parameter:

```properties
mixer_layout_class=com.flashphoner.media.mixer.video.presentation.CropNoPaddingGridLayout
```

The screen sharing layout is enabled if one of the mixer input streams has the name defined in the following parameter:

```properties
mixer_video_layout_desktop_key_word=desktop
```

By default, the name desktop is used for the screen sharing stream.

Since build 5.2.710, the screen sharing picture placement can be changed with the following parameter:

```properties
mixer_desktop_align=TOP
```

By default, the screen sharing picture is placed above the other pictures.

The following placements are supported:

| Placement | Description |
|---|---|
| TOP | Screen above |
| LEFT | Screen on the left side |
| RIGHT | Screen on the right side |
| BOTTOM | Screen below |
| CENTER | Screen in the center, surrounded by other pictures |

For example, the following parameter

```properties
mixer_desktop_align=RIGHT
```

places the screen sharing picture on the right side of the mixer output picture.

If several screen sharing streams are published to the same mixer, the first published stream takes the main place. If that stream stops, the main place is taken by the next screen sharing stream in alphabetical order.
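The rule for several screen sharing streams can be modelled as follows. This is a hypothetical sketch, not WCS code, and the stream names in it are made up. It reads "the next screen sharing stream in alphabetical order" as the alphabetically first of the remaining streams, which is an assumption.

```java
import java.util.TreeSet;

/**
 * Hypothetical model of which screen sharing stream holds the main place in a mixer.
 */
public class DesktopMainPlace {
    private final TreeSet<String> desktops = new TreeSet<>(); // kept in alphabetical order
    private String mainPlace;                                  // stream currently in the main place

    void publish(String name) {
        desktops.add(name);
        if (mainPlace == null) {
            mainPlace = name; // the first published screen sharing stream takes the main place
        }
    }

    void stop(String name) {
        desktops.remove(name);
        if (name.equals(mainPlace)) {
            // Assumption: the alphabetically first remaining stream takes over
            mainPlace = desktops.isEmpty() ? null : desktops.first();
        }
    }

    String mainPlace() {
        return mainPlace;
    }

    public static void main(String[] args) {
        DesktopMainPlace mixer = new DesktopMainPlace();
        mixer.publish("desktop3");
        mixer.publish("desktop1");
        mixer.publish("desktop2");
        System.out.println(mixer.mainPlace()); // desktop3: published first
        mixer.stop("desktop3");
        System.out.println(mixer.mainPlace()); // desktop1: first remaining in alphabetical order
    }
}
```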

The full-screen screen sharing layout is available since build 5.2.852. In this layout, the stream whose name is defined in the following parameter

```properties
mixer_video_layout_desktop_key_word=desktop
```

is used as the background for the other streams in the mixer. This layout is enabled with the following parameter:

```properties
mixer_video_desktop_fullscreen=true
```

For finer control over the mixer layout, develop a custom Java class that implements the IVideoMixerLayout interface, for example:

```java
// Package name should be strictly defined as com.flashphoner.mixerlayout
package com.flashphoner.mixerlayout;

// Import mixer layout interface
import com.flashphoner.sdk.media.IVideoMixerLayout;

// Import YUV frame description
import com.flashphoner.sdk.media.YUVFrame;

// Import Java packages to use
import java.awt.*;
import java.util.ArrayList;

/**
 * Custom mixer layout implementation example
 */
public class TestLayout implements IVideoMixerLayout {

    // Pictures padding, should be even (or zero if no padding needed)
    private static final int PADDING = 4;

    /**
     * Function to compute layout, will be called by mixer before encoding output stream picture
     * This example computes one-line grid layout
     * @param yuvFrames - incoming streams raw pictures array in YUV format
     * @param strings - incoming streams names array
     * @param canvasWidth - mixer output picture canvas width
     * @param canvasHeight - mixer output picture canvas height
     * @return array of pictures layouts
     */
    @Override
    public Layout[] computeLayout(YUVFrame[] yuvFrames, String[] strings, int canvasWidth, int canvasHeight) {
        // Declare picture layouts list to fill
        ArrayList<IVideoMixerLayout.Layout> layout = new ArrayList<>();
        // Find canvas center height
        int canvasCenter = canvasHeight / 2;
        // Find the vertical position of the pictures row (frame center)
        int frameCenter = canvasCenter - (canvasHeight / yuvFrames.length) / 2;
        // Find every picture dimensions (these are the same for all the pictures because this is grid layout)
        int layoutWidth = canvasWidth / yuvFrames.length - PADDING;
        int layoutHeight = canvasHeight / yuvFrames.length;
        // Iterate through incoming stream pictures array
        // Note: streams pictures order corresponds to stream names array, use this to reorder pictures if needed
        for (int c = 0; c < yuvFrames.length; c++) {
            // Use Java AWT Point and Dimension
            Point prevPoint = new Point();
            Dimension prevDimension = new Dimension();
            if (layout.size() > 0) {
                // Find previous picture location to calculate next one
                prevPoint.setLocation(layout.get(c - 1).getPoint());
                prevDimension.setSize(layout.get(c - 1).getDimension());
            }
            // Set starting point of the picture
            Point currentPoint = new Point((int) (prevPoint.getX() + prevDimension.getWidth() + PADDING),
                    frameCenter);
            // Create the picture layout passing starting point, dimensions and raw picture YUV frames
            layout.add(new IVideoMixerLayout.Layout(currentPoint, new Dimension(layoutWidth,
                    layoutHeight), yuvFrames[c]));
        }
        // Return pictures layouts calculated as array
        return layout.toArray(new IVideoMixerLayout.Layout[layout.size()]);
    }
}
```
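To check the arithmetic of TestLayout without the WCS libraries, the same grid calculation can be reproduced in plain Java. GridLayoutMath is a hypothetical helper that returns {x, y, width, height} for each picture. With three streams on the default 1280x720 canvas, it gives 422x240 pictures at x = 4, 430 and 856, all with y = 240.

```java
import java.util.Arrays;

/**
 * Reproduces the TestLayout grid arithmetic without WCS classes (hypothetical helper).
 */
public class GridLayoutMath {
    static final int PADDING = 4; // same value as in TestLayout

    /** Returns {x, y, width, height} for every picture, in incoming frame order. */
    static int[][] compute(int frames, int canvasWidth, int canvasHeight) {
        int layoutWidth = canvasWidth / frames - PADDING;
        int layoutHeight = canvasHeight / frames;
        // Same value as frameCenter in TestLayout: the y coordinate of every picture's starting point
        int y = canvasHeight / 2 - layoutHeight / 2;
        int[][] pictures = new int[frames][];
        int x = 0;
        for (int c = 0; c < frames; c++) {
            x += PADDING; // each picture, including the first, starts PADDING pixels after the previous one ends
            pictures[c] = new int[] {x, y, layoutWidth, layoutHeight};
            x += layoutWidth;
        }
        return pictures;
    }

    public static void main(String[] args) {
        // Prints [4, 240, 422, 240], [430, 240, 422, 240], [856, 240, 422, 240] on separate lines
        for (int[] picture : compute(3, 1280, 720)) {
            System.out.println(Arrays.toString(picture));
        }
    }
}
```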

Since build 5.2.878, to support placing caption text above or below a stream picture, the Box class should be used to calculate picture placement, for example:

```java
// Package name should be strictly defined as com.flashphoner.mixerlayout
package com.flashphoner.mixerlayout;

// Import mixer layout interface
import com.flashphoner.sdk.media.IVideoMixerLayout;

// Import YUV frame description
import com.flashphoner.sdk.media.YUVFrame;

// Import Box class and box positions for picture operations
import com.flashphoner.media.mixer.video.presentation.Box;
import com.flashphoner.media.mixer.video.presentation.BoxPosition;

// Import MixerConfig class
import com.flashphoner.server.commons.rmi.data.impl.MixerConfig;

// Import Java packages to use
import java.awt.*;
import java.util.ArrayList;

/**
 * Custom mixer layout implementation example
 */
public class TestLayout implements IVideoMixerLayout {

    // Pictures padding, should be even (or zero if no padding needed)
    private static final int PADDING = 4;

    /**
     * Function to compute layout, will be called by mixer before encoding output stream picture
     * This example computes one-line grid layout
     * @param yuvFrames - incoming streams raw pictures array in YUV format
     * @param strings - incoming streams names array
     * @param canvasWidth - mixer output picture canvas width
     * @param canvasHeight - mixer output picture canvas height
     * @return array of pictures layouts
     */
    @Override
    public Layout[] computeLayout(YUVFrame[] yuvFrames, String[] strings, int canvasWidth, int canvasHeight) {
        // This object represents mixer canvas
        Box mainBox = new Box(null, canvasWidth, canvasHeight);
        // Find every picture dimensions (these are the same for all the pictures because this is grid layout)
        int frameBoxWidth = canvasWidth / yuvFrames.length - PADDING;
        int frameBoxHeight = canvasHeight / yuvFrames.length;
        // Container to place stream pictures
        Box container = new Box(mainBox, canvasWidth, frameBoxHeight);
        container.setPosition(BoxPosition.CENTER);
        // Iterate through incoming stream pictures array
        // Note: streams pictures order corresponds to stream names array, use this to reorder pictures if needed
        boolean firstFrame = true;
        for (int c = 0; c < yuvFrames.length; c++) {
            // Stream picture parent rectangle
            // This rectangle can include stream caption if mixer_text_outside_frame setting is enabled
            Box frameBox = new Box(container, frameBoxWidth, (int) container.getSize().getHeight());
            frameBox.setPosition(BoxPosition.INLINE_HORIZONTAL_CENTER);
            // Add padding for subsequent pictures
            if (!firstFrame) {
                frameBox.setPaddingLeft(PADDING);
            }
            firstFrame = false;
            // Compute picture frame placement including stream caption text
            Box frame = Box.computeBoxWithFrame(frameBox, yuvFrames[c]);
            // Adjust picture to the parent rectangle
            frame.fillParent();
        }
        // Prepare an array to return layout calculated
        ArrayList<IVideoMixerLayout.Layout> layout = new ArrayList<>();
        // Calculate mixer layout
        mainBox.computeLayout(layout);
        // Return the result
        return layout.toArray(new IVideoMixerLayout.Layout[layout.size()]);
    }

    /**
     * The function for internal use. Must be overridden.
     */
    @Override
    public void setConfig(MixerConfig mixerConfig) {
    }
}
```

Main Box class methods:

```java
/**
 * Set paddings
 * @param padding padding value to set
 **/
public void setPaddingLeft(int paddingLeft);
public void setPaddingRight(int paddingRight);
public void setPaddingTop(int paddingTop);
public void setPaddingBottom(int paddingBottom);

/**
 * Set position
 * @param position box position to set
 **/
public void setPosition(BoxPosition position);

/**
 * Compute location and size of this box and all of its content
 * @param layouts aggregator collection to put computed layouts to
 */
public void computeLayout(ArrayList<IVideoMixerLayout.Layout> layouts);

/**
 * Compute box location inside the parent Box including caption text placement
 * @param parent parent box
 * @param yuvFrame frame to compute location
 * @return frame with location computed
 */
public static Box computeBoxWithFrame(Box parent, YUVFrame yuvFrame);

/**
 * Scale box to the size closest to size of the parent, preserving aspect ratio
 */
public void fillParent();

/**
 * Sets box size without preserving aspect ratio, crops image from center
 */
public void fillParentNoScale();
```
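The geometry behind fillParent() and fillParentNoScale() can be approximated as shown below. This is an independent sketch of fit-inside scaling and center cropping, not the Box implementation. The class FitMath and its methods are hypothetical.

```java
/**
 * Approximation of two fitting strategies (hypothetical, not the Box class):
 * fit = largest size with the source aspect ratio that fits into the parent;
 * centerCrop = source region cut around the center to match the parent aspect ratio.
 */
public class FitMath {
    /** Returns {width, height} of the scaled picture. */
    static int[] fit(int srcW, int srcH, int parentW, int parentH) {
        double scale = Math.min((double) parentW / srcW, (double) parentH / srcH);
        return new int[] {(int) (srcW * scale), (int) (srcH * scale)};
    }

    /** Returns {x, y, width, height} of the source region kept after cropping. */
    static int[] centerCrop(int srcW, int srcH, int parentW, int parentH) {
        int cropW = srcW;
        int cropH = srcH;
        // Compare aspect ratios by cross-multiplication to avoid floating point rounding
        if ((long) srcW * parentH > (long) srcH * parentW) {
            cropW = (int) ((long) srcH * parentW / parentH); // source is wider: cut left and right
        } else {
            cropH = (int) ((long) srcW * parentH / parentW); // source is taller: cut top and bottom
        }
        return new int[] {(srcW - cropW) / 2, (srcH - cropH) / 2, cropW, cropH};
    }

    public static void main(String[] args) {
        // 640x480 picture fitted into a 422x240 cell becomes 320x240
        System.out.println(java.util.Arrays.toString(fit(640, 480, 422, 240)));
        // 1280x720 picture cropped for a square cell keeps the central 720x720 region
        System.out.println(java.util.Arrays.toString(centerCrop(1280, 720, 240, 240)));
    }
}
```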

Possible positions to place Box object:

```java
public enum BoxPosition {
    //attach box to the left
    LEFT,
    //attach box to the right
    RIGHT,
    //attach box to the top
    TOP,
    //attach box to the bottom
    BOTTOM,
    //center box in the parent box
    CENTER,
    //bottom and center box in the parent box
    BOTTOM_CENTER,
    //top and center box in the parent box
    TOP_CENTER,
    //attach box to the right-top corner of left adjoining box (in the same parent box)
    INLINE_HORIZONTAL,
    //same as INLINE_HORIZONTAL but additionally adds vertical CENTER
    INLINE_HORIZONTAL_CENTER,
    //attach box to the left-bottom corner of upper adjoining box (in the same parent box)
    INLINE_VERTICAL,
    //same as INLINE_VERTICAL but additionally adds horizontal CENTER
    INLINE_VERTICAL_CENTER
}
```

Then compile the class into bytecode. To do this, create a folder tree matching the TestLayout class package name and place TestLayout.java into it:

```bash
mkdir -p com/flashphoner/mixerlayout
```

and run the command

```bash
javac -cp /usr/local/FlashphonerWebCallServer/lib/wcs-core.jar ./com/flashphoner/mixerlayout/TestLayout.java
```

Now, pack the compiled code into a jar file

```bash
jar -cf testlayout.jar ./com/flashphoner/mixerlayout/TestLayout.class
```

and copy this file to the WCS libraries folder:

```bash
cp testlayout.jar /usr/local/FlashphonerWebCallServer/lib
```

To use the custom mixer layout class, set it in the following parameter in the flashphoner.properties file

```properties
mixer_layout_class=com.flashphoner.mixerlayout.TestLayout
```

and restart WCS.

With this custom layout, mixer output stream for three input streams will look like:

Since build 5.2.693, the mixer layout can be set when creating a mixer with the REST API, for example:

```json
{
  "uri": "mixer://mixer1",
  "localStreamName": "mixer1",
  "mixerLayoutClass": "com.flashphoner.mixerlayout.TestLayout"
}
```

Thus, the layout can be defined for every mixer separately.

Some browsers do not support playback of H264 streams encoded with certain profiles. To solve this, the following parameter was added in build 5.2.414 to set the mixer output stream encoding profile:

```properties
mixer_video_profile_level=42c02a
```

By default, the Constrained Baseline profile, level 4.2, is used.

Since build 5.2.693, a mixer background and a watermark can be set when creating a mixer with the REST API, for example:

```json
{
  "uri": "mixer://mixer1",
  "localStreamName": "mixer1",
  "watermark": "watermark.png",
  "background": "background.png"
}
```

By default, the files should be placed in the /usr/local/FlashphonerWebCallServer/conf folder. A full path to the files can also be set, for example:

```json
{
  "uri": "mixer://mixer1",
  "localStreamName": "mixer1",
  "watermark": "/opt/media/watermark.png",
  "background": "/opt/media/background.png"
}
```

By default, the mixer converts stereo audio from incoming streams to mono to reduce the amount of processed data. This minimizes possible delay when the realtime mixer is used for video conferencing.

The mixer can be switched to stereo sound processing if necessary, for example, for online music radio. Since build 5.2.922, the number of mixer audio channels can be set with the following parameter:

```properties
audio_mixer_output_channels=2
```

Mixer MCU support for audio can be enabled with the following parameter:

```properties
mixer_mcu_audio=true
```

In this case for three input streams stream1, stream2, stream3 the following output streams will be generated in mixer mixer1:

| Output stream name | stream1 audio | stream1 video | stream2 audio | stream2 video | stream3 audio | stream3 video |
|---|---|---|---|---|---|---|
| mixer1 | + | + | + | + | + | + |
| mixer1-stream1 | - | - | + | - | + | - |
| mixer1-stream2 | + | - | - | - | + | - |
| mixer1-stream3 | + | - | + | - | - | - |

Thus, each of the additional streams contains the audio of all streams in the mixer except one. This allows, for example, eliminating echo for conference participants.
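The composition of the MCU output streams in the table above follows a simple rule. The main output mixer1 carries every input, and mixer1-<name> carries every input except <name>. The hypothetical sketch below derives the audio sources of each output stream from the list of inputs. The naming pattern comes from the table. The code is not WCS code.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/**
 * Models which inputs are heard in each output stream when mixer_mcu_audio is enabled.
 */
public class McuOutputs {
    static Map<String, List<String>> audioSources(String mixer, List<String> inputs) {
        Map<String, List<String>> outputs = new LinkedHashMap<>();
        outputs.put(mixer, new ArrayList<>(inputs)); // main output: every participant
        for (String excluded : inputs) {
            List<String> others = new ArrayList<>(inputs);
            others.remove(excluded); // the participant does not hear itself, which avoids echo
            outputs.put(mixer + "-" + excluded, others);
        }
        return outputs;
    }

    public static void main(String[] args) {
        audioSources("mixer1", List.of("stream1", "stream2", "stream3"))
                .forEach((name, sources) -> System.out.println(name + ": " + sources));
    }
}
```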

This feature can also be enabled for video with the following parameter:

```properties
mixer_mcu_video=true
```

In this case for three input streams stream1, stream2, stream3 the following output streams will be generated in mixer mixer1:

| Output stream name | stream1 audio | stream1 video | stream2 audio | stream2 video | stream3 audio | stream3 video |
|---|---|---|---|---|---|---|
| mixer1 | + | + | + | + | + | + |
| mixer1-stream1 | - | - | + | + | + | + |
| mixer1-stream2 | + | + | - | - | + | + |
| mixer1-stream3 | + | + | + | + | - | - |

Thus, each of the additional streams contains the audio and video of all streams in the mixer except one. This allows arranging a full-fledged chat room based on the mixer.

In this case, if mixer recording is enabled with the parameter

```properties
record_mixer_streams=true
```

only the main mixer output stream is recorded (mixer1 in the example above).

If video MCU support is enabled, channel losses can affect the mixer output stream quality. The custom lossless videoprocessor can be used to improve quality, but it may add latency.

Since build 5.2.835 it is possible to change audio level and mute video for mixer incoming streams. In this case, the original stream remains unchanged. Video track can be muted (black screen) and then unmuted. For audio track, volume level can be set in percent up to 100, or sound can be muted by setting level to 0.

Incoming streams are managed using REST API query /mixer/setAudioVideo.

For example, create a mixer and add 3 streams to it, 2 participants and 1 speaker:

```bash
curl -H "Content-Type: application/json" -X POST http://localhost:8081/rest-api/mixer/startup -d '{"uri": "mixer://m1", "localStreamName":"m1"}'
curl -H "Content-Type: application/json" -X POST http://localhost:8081/rest-api/mixer/add -d '{"uri": "mixer://m1", "remoteStreamName": "stream1"}'
curl -H "Content-Type: application/json" -X POST http://localhost:8081/rest-api/mixer/add -d '{"uri": "mixer://m1", "remoteStreamName": "stream2"}'
curl -H "Content-Type: application/json" -X POST http://localhost:8081/rest-api/mixer/add -d '{"uri": "mixer://m1", "remoteStreamName": "desktop"}'
```

Mute all participants except the speaker:

```http
POST /rest-api/mixer/setAudioVideo HTTP/1.1
User-Agent: curl/7.29.0
Host: localhost:8081
Accept: */*
Content-Type: application/json
Content-Length: 62

{
  "uri": "mixer://m1",
  "streams": "^stream.*",
  "audioLevel": 0
}
```

The streams parameter of the REST query can be set either as a regular expression that matches streams by name, or as a list of streams. Use the regular expression syntax supported by Java; examples can be found here.
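To preview which streams a regular expression selects, java.util.regex can be applied to a list of names. This sketch assumes the whole stream name must match (Matcher.matches()). The server may apply the pattern differently, so treat the result as an approximation. StreamSelector is a hypothetical helper.

```java
import java.util.List;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

/**
 * Shows which mixer input streams a "streams" regular expression would select.
 * Assumption: full-match semantics; the server's matching rule is not documented here.
 */
public class StreamSelector {
    static List<String> select(String regex, List<String> streamNames) {
        Pattern pattern = Pattern.compile(regex);
        return streamNames.stream()
                .filter(name -> pattern.matcher(name).matches())
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Stream names from the example mixer m1; prints [stream1, stream2]
        System.out.println(select("^stream.*", List.of("stream1", "stream2", "desktop")));
    }
}
```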

Mute stream1 video:

```http
POST /rest-api/mixer/setAudioVideo HTTP/1.1
User-Agent: curl/7.29.0
Host: localhost:8081
Accept: */*
Content-Type: application/json
Content-Length: 65

{
  "uri": "mixer://m1",
  "streams": ["stream1"],
  "videoMuted": true
}
```

Check the streams state with the /mixer/find_all query.
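The two /mixer/setAudioVideo bodies used in this example can also be produced programmatically. SetAudioVideoBody is a hypothetical helper with two assumptions. First, audioLevel is an integer percentage from 0 to 100, as described above. Second, stream names and patterns contain no characters that need JSON escaping; a pattern with a backslash, for instance, would need escaping.

```java
import java.util.List;
import java.util.stream.Collectors;

/**
 * Sketch of a body builder for /mixer/setAudioVideo (hypothetical, not part of WCS).
 */
public class SetAudioVideoBody {
    /** Sets the audio level for streams selected by a regular expression, e.g. "^stream.*". */
    static String audioLevel(String mixerUri, String streamsRegex, int level) {
        if (level < 0 || level > 100) {
            throw new IllegalArgumentException("audioLevel must be within 0..100, got " + level);
        }
        return "{\"uri\": \"" + mixerUri + "\", \"streams\": \"" + streamsRegex
                + "\", \"audioLevel\": " + level + "}";
    }

    /** Mutes or unmutes video for an explicit list of stream names. */
    static String videoMuted(String mixerUri, List<String> streams, boolean muted) {
        String array = streams.stream()
                .map(name -> "\"" + name + "\"")
                .collect(Collectors.joining(", ", "[", "]"));
        return "{\"uri\": \"" + mixerUri + "\", \"streams\": " + array + ", \"videoMuted\": " + muted + "}";
    }

    public static void main(String[] args) {
        System.out.println(audioLevel("mixer://m1", "^stream.*", 0));
        System.out.println(videoMuted("mixer://m1", List.of("stream1"), true));
    }
}
```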


1. For this test we use:

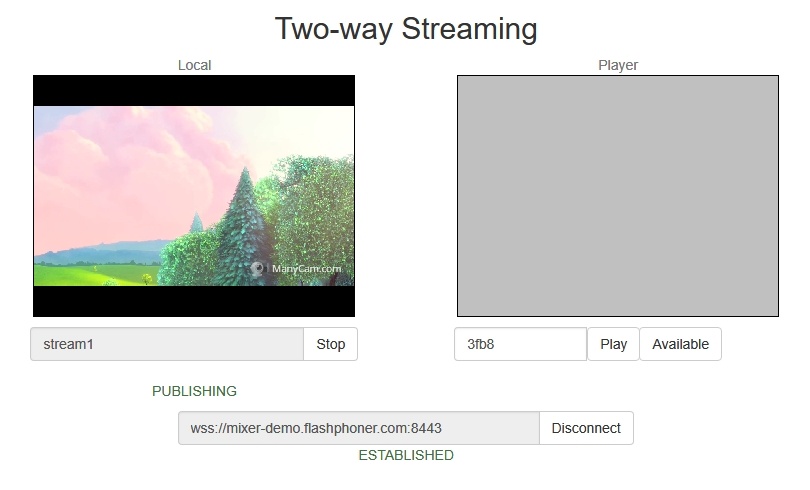

2. Open the page of the Two Way Streaming application. Publish the stream named stream1:

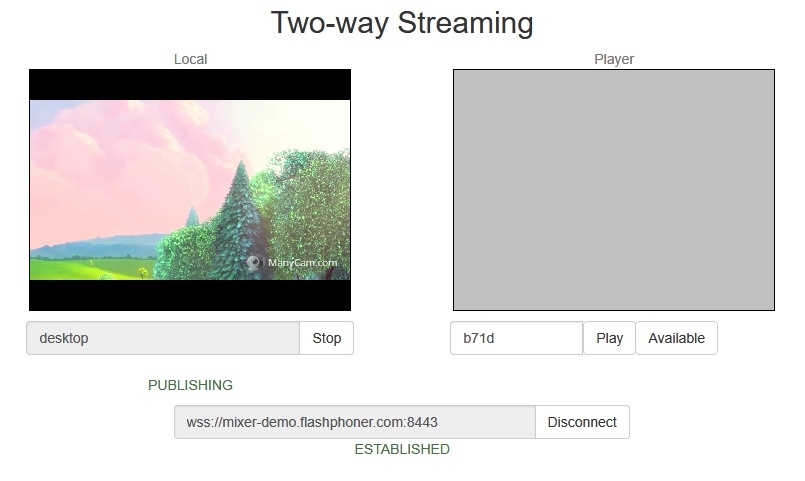

3. In another tab open the page of the Two Way Streaming application. Publish the stream named desktop:

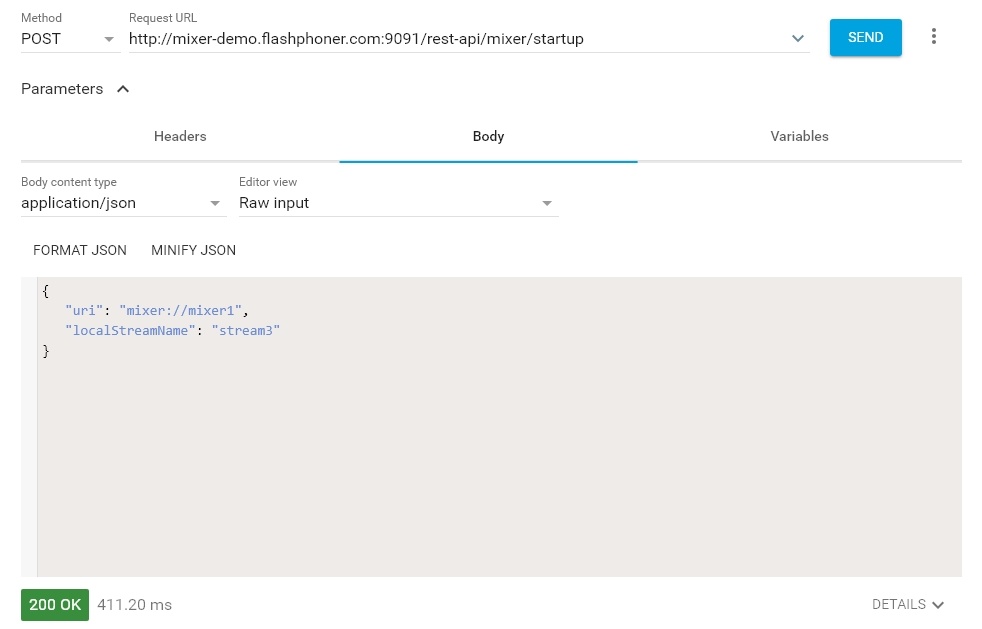

4. Open the REST client. Send the /mixer/startup query and specify the URI of the mixer mixer://mixer1 and the output stream name stream3 in its parameters:

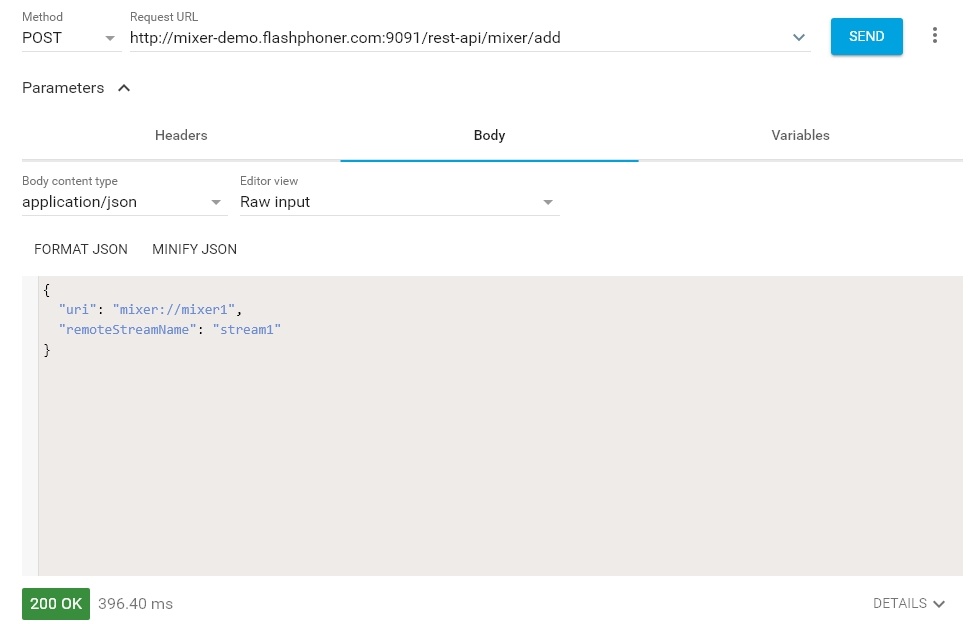

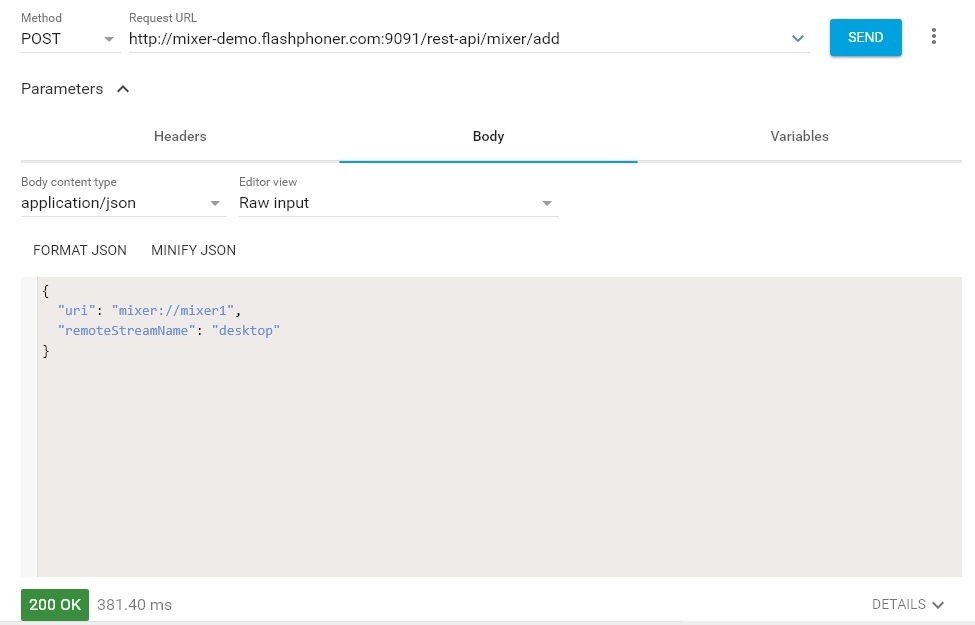

5. Send the /mixer/add query and specify the URI of the mixer mixer://mixer1 and the input stream name stream1 in its parameters:

6. Open the Player web application, specify the name of the output stream of the mixer stream3 in the Stream field and click Start:

7. Send /mixer/add and specify the URI of the mixer mixer://mixer1 and the input stream name desktop in its parameters:

8. In the output stream of the mixer you should see the desktop stream that imitates screen sharing and the stream stream1:

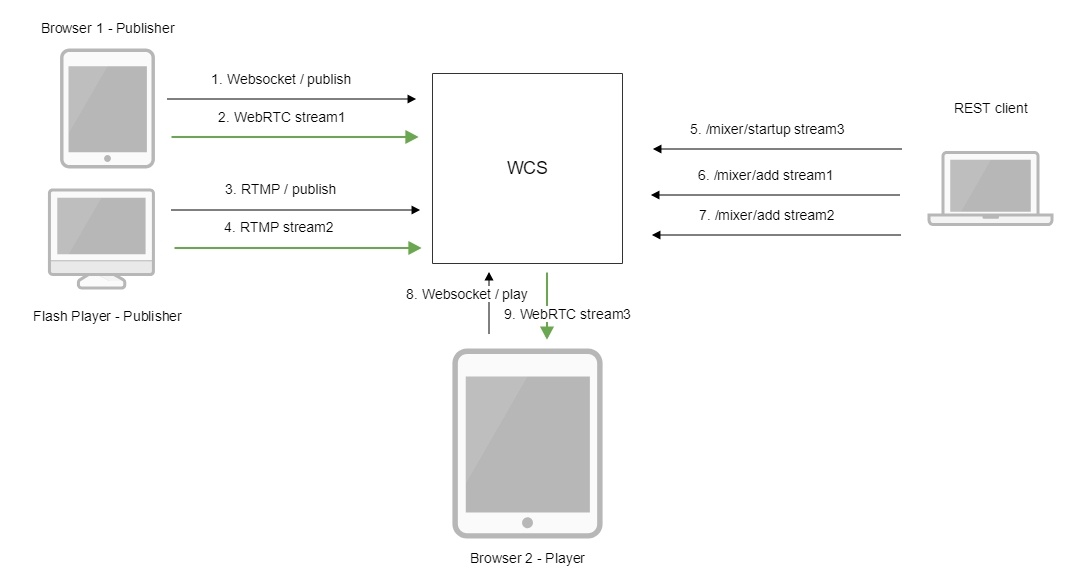

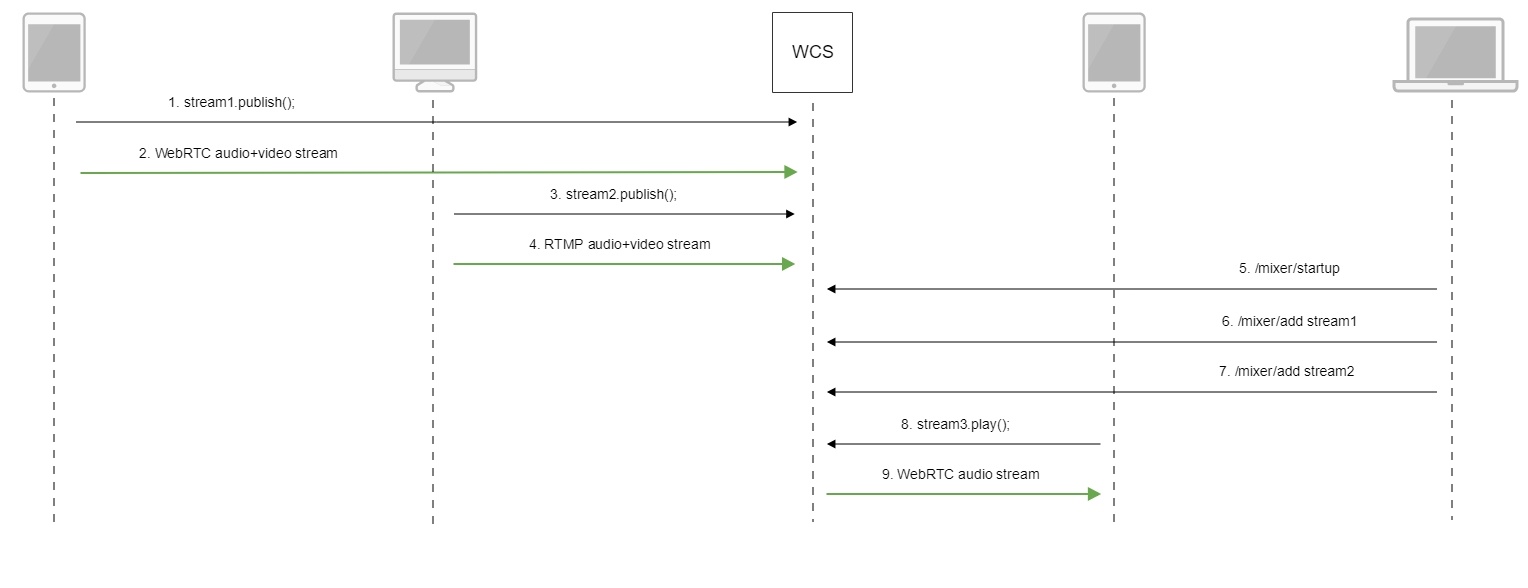

Below is the call flow when using the mixer.

1. Publishing of the WebRTC stream stream1

2. Sending the WebRTC stream to the server

3. Publishing the RTMP stream stream2

4. Sending the RTMP stream to the server

5. Sending the /mixer/startup query to create the mixer://stream3 mixer with the output stream stream3

http://demo.flashphoner.com:9091/rest-api/mixer/startup

```json
{
  "uri": "mixer://stream3",
  "localStreamName": "stream3"
}
```

6. Sending the /mixer/add query to add stream1 to the mixer://stream3 mixer

http://demo.flashphoner.com:9091/rest-api/mixer/add

```json
{
  "uri": "mixer://stream3",
  "localStreamName": "stream3",
  "remoteStreamName": "stream1"
}
```

7. Sending the /mixer/add query to add stream2 to the mixer://stream3 mixer

http://demo.flashphoner.com:9091/rest-api/mixer/add

```json
{
  "uri": "mixer://stream3",
  "localStreamName": "stream3",
  "remoteStreamName": "stream2"
}
```

8. Playing the WebRTC stream stream3

9. Sending the WebRTC audio stream to the client

By default, all the standard REST hooks are invoked for mixer stream. The /connect REST hook is invoked while creating a mixer.

If necessary, a separate server application can be created to handle mixer REST hooks. Since build 5.2.634, REST hooks can be sent to this application using the following parameter:

```properties
mixer_app_name=defaultApp
```

By default, mixer REST hooks are handled by defaultApp, like the REST hooks of other streams.

1. A mixer is not created if the mixer name contains symbols that are not allowed in a URI.

Symptoms: a mixer with a name like test_mixer is not created.

Solution: do not use disallowed symbols in mixer or stream names, especially if automatic mixer creation is enabled. For instance, the name

```
user_1#my_room
```

cannot be used.

If streams of chat rooms are mixed, room names also cannot use restricted symbols.

2. The mixer output stream is empty if transcoding is enabled on the server on demand only.

Symptoms: a video stream mixer is created successfully, but a black screen is played in the mixer output stream.

Solution: for the stream mixer to work, transcoding should be enabled on the server with the following parameter in the flashphoner.properties file:

```properties
streaming_video_decoder_fast_start=true
```

3. The same stream cannot be added to two or more mixers if a non-realtime mixer is used.

Symptoms: when the stream is added to a second mixer, the first mixer output stream stops.

Solution: do not use the same stream in more than one mixer.
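A rough client-side pre-check for the first troubleshooting item above can be built on java.net.URI. Its parser does not accept '_' in a host name, so getHost() returns null for mixer://test_mixer. This is a sketch based on an assumption: that the server's restriction resembles URI host name rules. The exact set of symbols the server rejects is not listed in this document. MixerNameCheck is a hypothetical helper.

```java
import java.net.URI;
import java.net.URISyntaxException;

/**
 * Hypothetical pre-check for mixer names before creating mixer://<name>.
 * Assumption: names that are not valid URI host names may be rejected by the server.
 */
public class MixerNameCheck {
    static boolean isUsableMixerName(String name) {
        try {
            // getHost() is null when the authority is not a valid server-based host name
            return new URI("mixer://" + name).getHost() != null;
        } catch (URISyntaxException e) {
            return false; // characters such as spaces make the URI unparseable
        }
    }

    public static void main(String[] args) {
        for (String name : new String[] {"room1", "test_mixer", "my_room"}) {
            System.out.println(name + ": " + (isUsableMixerName(name) ? "ok" : "rejected"));
        }
    }
}
```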