In an iOS mobile application via WebRTC

Overview

WCS provides an SDK for developing client applications for the iOS platform.

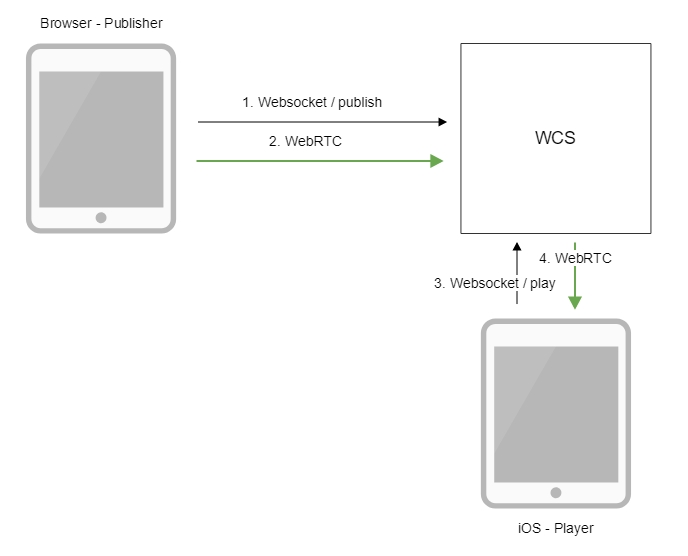

Operation flowchart

- The browser connects to the server via the WebSocket protocol and sends the `publishStream` command.
- The browser captures the microphone and the camera and sends the WebRTC stream to the server.
- The iOS device connects to the server via the WebSocket protocol and sends the `playStream` command.
- The iOS device receives the WebRTC stream from the server and plays it in the application.

Quick manual on testing

- For the test we use:

- the demo server at

demo.flashphoner.com; - the Two Way Streaming web application to publish the stream;

-

the iOS mobile application from AppStore to play the stream.

-

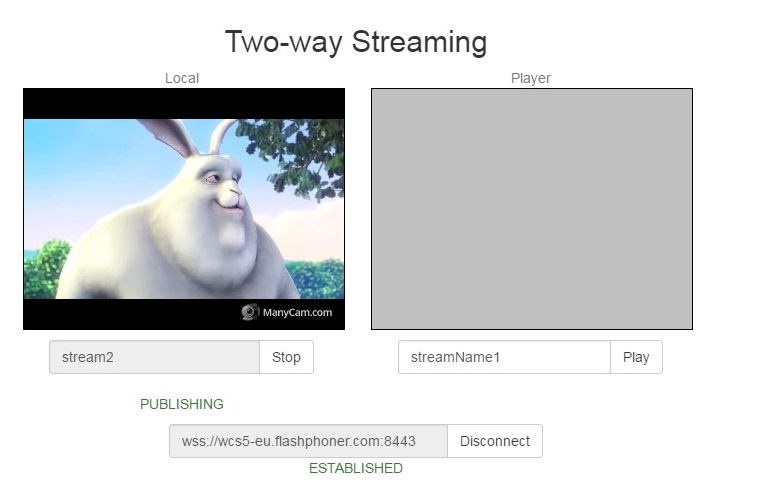

Open the Two Way Streaming web application. Click

Connect, thenPublish. Copy the identifier of the stream:

-

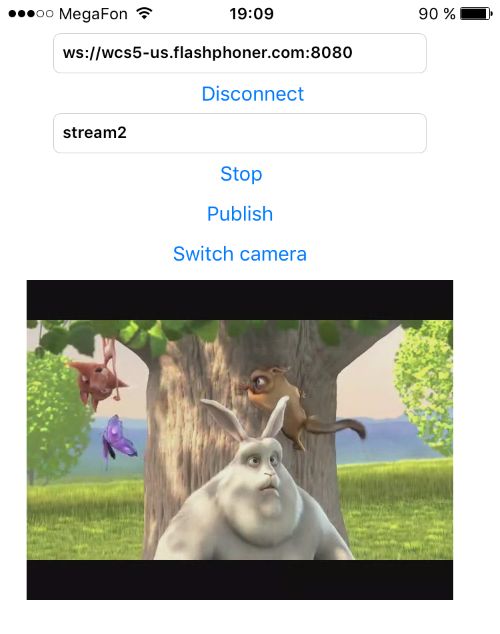

Install the iOS mobile application from AppStore. Run the application. Enter the URL of the WCS server and the name of the published stream, then click

Play. The stream starts playing from the server:

Call flow

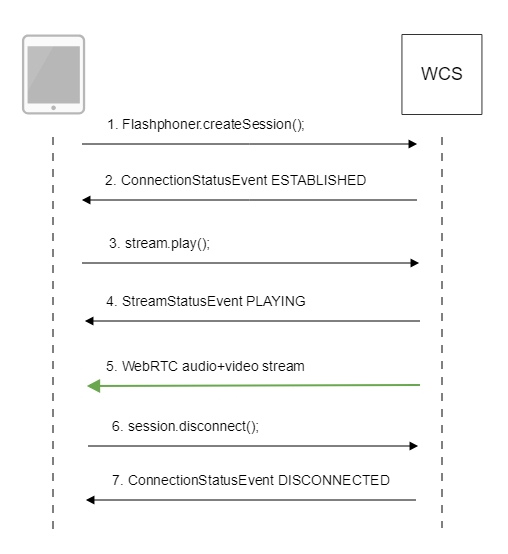

Below is the call flow when using the Player example.

- Establishing a connection to the server

  `FPWCSApi2.createSession()`

- Receiving an event from the server confirming a successful connection

  `FPWCSApi2Session.on:kFPWCSSessionStatusEstablished callback`

- Playing the stream

  `FPWCSApi2Session.createStream()`

  ```objc
  - (FPWCSApi2Stream *)playStream {
      // Reuse the session that is already connected to the server
      FPWCSApi2Session *session = [FPWCSApi2 getSessions][0];
      FPWCSApi2StreamOptions *options = [[FPWCSApi2StreamOptions alloc] init];
      options.name = _remoteStreamName.text;  // name of the published stream
      options.display = _remoteDisplay;       // view that renders the video
      NSError *error = nil;
      // Pass &error so the failure reason is available in the branch below
      FPWCSApi2Stream *stream = [session createStream:options error:&error];
      if (!stream) {
          ...
          return nil;
      }
      ...
      return stream;
  }
  ```

- Receiving an event from the server confirming that the stream is playing

  `FPWCSApi2Stream.on:kFPWCSStreamStatusPlaying callback`

- Receiving the audio and video stream via WebRTC

- Stopping the playback of the stream

  `FPWCSApi2Session.disconnect()`

  ```objc
  if ([button.titleLabel.text isEqualToString:@"STOP"]) {
      if ([FPWCSApi2 getSessions].count) {
          // An active session exists: close it, which also stops playback
          FPWCSApi2Session *session = [FPWCSApi2 getSessions][0];
          NSLog(@"Disconnect session with server %@", [session getServerUrl]);
          [session disconnect];
      } else {
          // No session to close: only reset the UI state
          NSLog(@"Nothing to disconnect");
          [self onDisconnected];
      }
      ...
  }
  ```

- Receiving an event from the server confirming that the playback of the stream has stopped

  `FPWCSApi2Session.on:kFPWCSSessionStatusDisconnected callback`
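The steps of the call flow can be tied together roughly as shown below. This is a minimal sketch and not the source of the Player example. The `PlayerSketch` class, its method names, and the `streamName`, `serverUrl` and `display` parameters are hypothetical. The `FPWCSApi2SessionOptions` class with its `urlServer` and `appKey` properties, the `connect` and `play:` calls, the `error:` argument of `createSession`, and the header path are assumptions about the iOS SDK that are not stated in this document, so check them against the SDK headers before use.

```objc
#import <Foundation/Foundation.h>
#import <FPWCSApi2/FPWCSApi2.h>  // assumed header path of the WCS iOS SDK

// Hypothetical helper class, not part of the SDK or of the Player example.
@interface PlayerSketch : NSObject
- (void)playStreamNamed:(NSString *)streamName
             fromServer:(NSString *)serverUrl
              onDisplay:(id)display;
- (void)stop;
@end

@implementation PlayerSketch

- (void)playStreamNamed:(NSString *)streamName
             fromServer:(NSString *)serverUrl
              onDisplay:(id)display {
    // Establish a connection (options class and properties are assumptions)
    FPWCSApi2SessionOptions *sessionOptions = [[FPWCSApi2SessionOptions alloc] init];
    sessionOptions.urlServer = serverUrl;
    sessionOptions.appKey = @"defaultApp";
    NSError *error = nil;
    FPWCSApi2Session *session = [FPWCSApi2 createSession:sessionOptions error:&error];
    if (!session) {
        NSLog(@"Failed to create session: %@", error.localizedDescription);
        return;
    }

    // Connection confirmed: create the stream and request playback
    [session on:kFPWCSSessionStatusEstablished callback:^(FPWCSApi2Session *established) {
        FPWCSApi2StreamOptions *streamOptions = [[FPWCSApi2StreamOptions alloc] init];
        streamOptions.name = streamName;
        streamOptions.display = display;
        NSError *streamError = nil;
        FPWCSApi2Stream *stream = [established createStream:streamOptions error:&streamError];
        if (!stream) {
            NSLog(@"Failed to create stream: %@", streamError.localizedDescription);
            return;
        }
        // Playback confirmed by the server
        [stream on:kFPWCSStreamStatusPlaying callback:^(FPWCSApi2Stream *playing) {
            NSLog(@"Stream is playing");
        }];
        // Assumed call that starts playback
        if (![stream play:&streamError]) {
            NSLog(@"Failed to play stream: %@", streamError.localizedDescription);
        }
    }];

    // Disconnection confirmed by the server after stop is called
    [session on:kFPWCSSessionStatusDisconnected callback:^(FPWCSApi2Session *closed) {
        NSLog(@"Session disconnected");
    }];

    // Assumed call that opens the WebSocket connection
    [session connect];
}

- (void)stop {
    // Disconnecting the session also stops playback
    if ([FPWCSApi2 getSessions].count) {
        FPWCSApi2Session *session = [FPWCSApi2 getSessions][0];
        [session disconnect];
    }
}

@end
```

Registering the callbacks before calling `connect` means no status event can arrive before its handler is in place. Creating the stream inside the `kFPWCSSessionStatusEstablished` handler keeps the order of the call flow, where playback is requested only after the connection is confirmed.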